MLOps in Finance: A Strategic Guide to Scaling ML from Experiments to Production

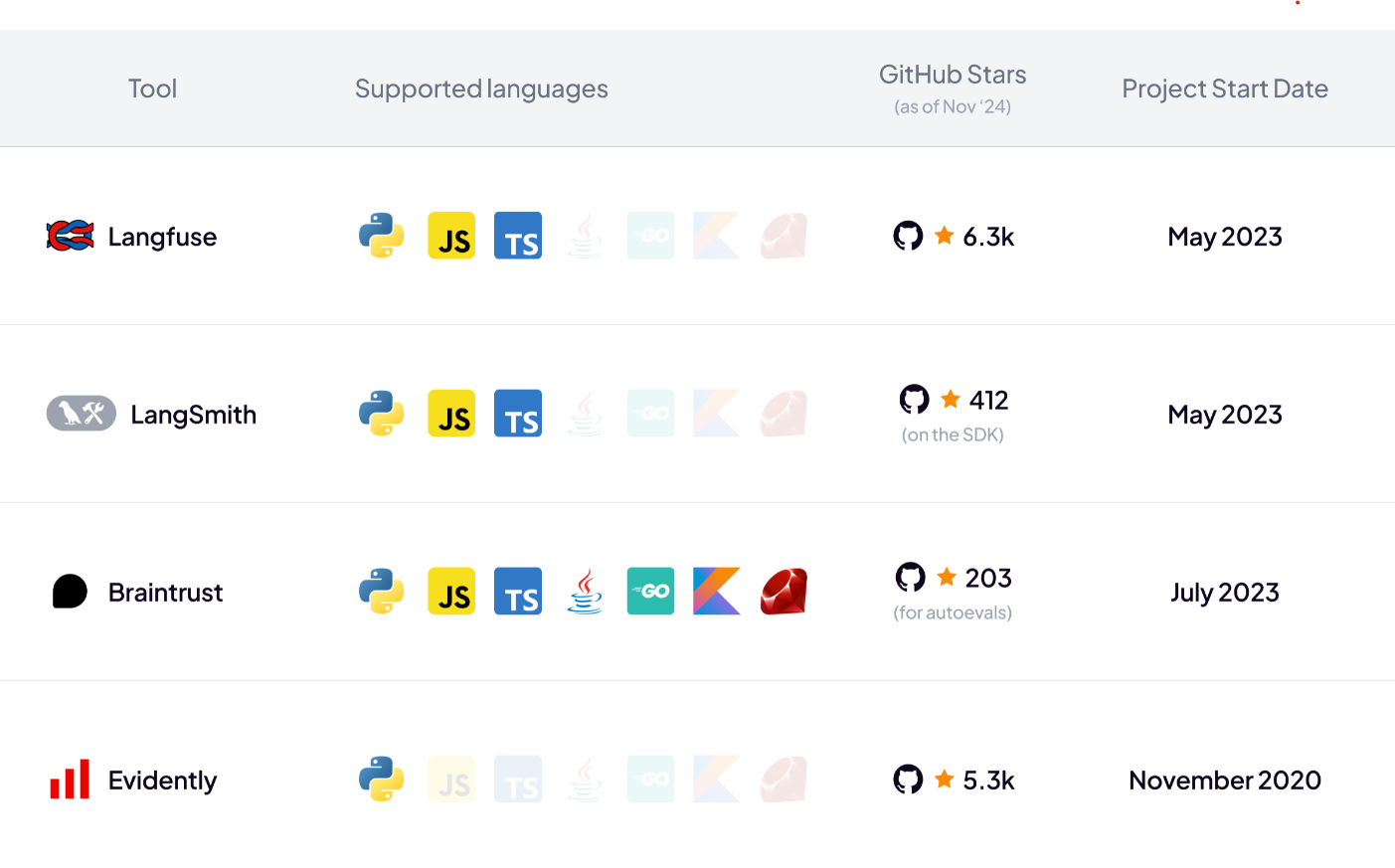

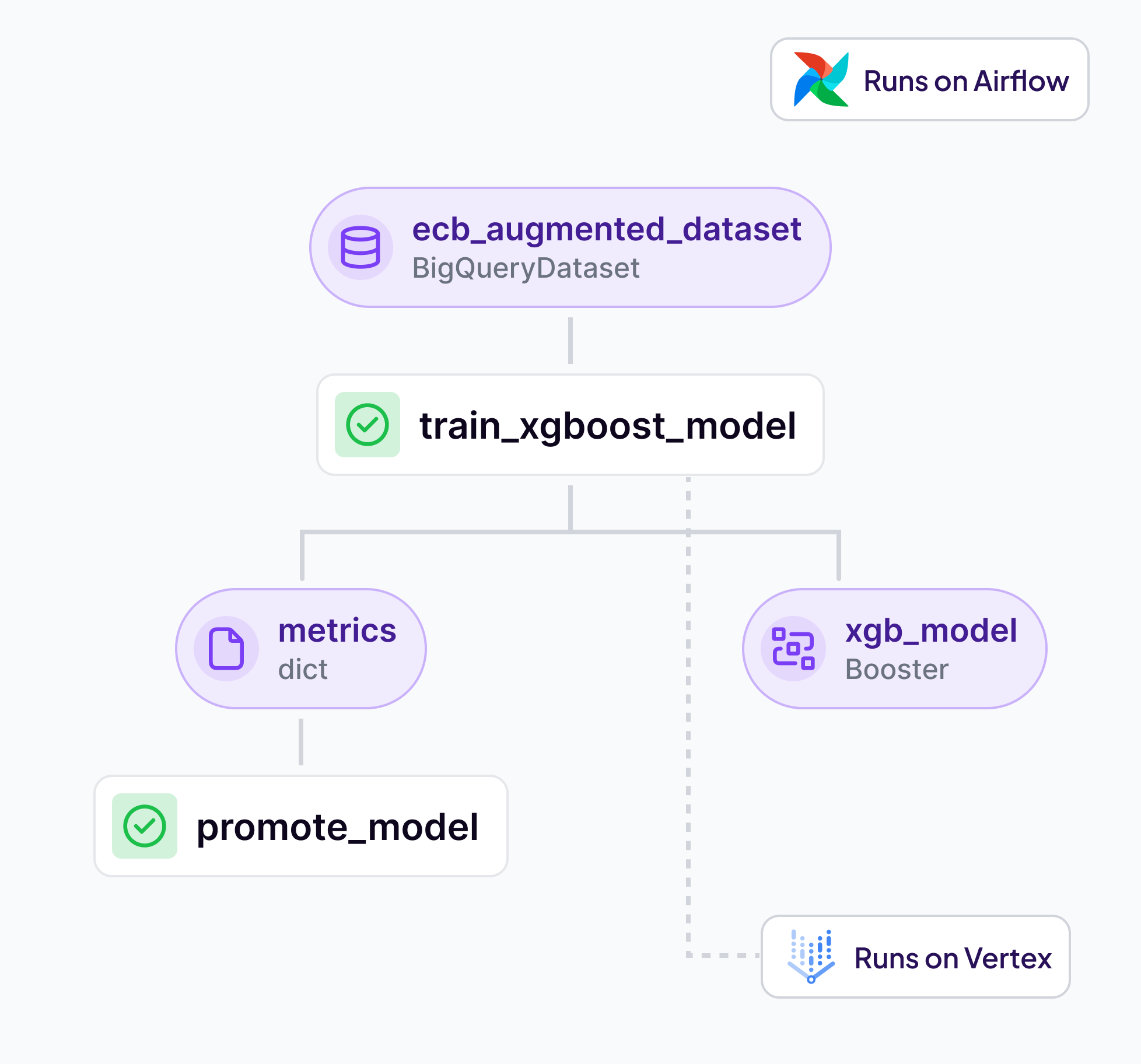

Discover how financial institutions can move machine learning projects from the experimental phase into robust production environments. This guide covers the main challenges of MLOps implementation and strategies for addressing them, including regulatory compliance, team scaling, and infrastructure decisions. Learn practical approaches to building scalable ML systems while maintaining security and efficiency, with a special focus on emerging technologies such as retrieval-augmented generation (RAG) and their role in enterprise AI adoption. Written for ML practitioners, technical leaders, and decision-makers in the financial sector who want to scale their ML operations effectively.