From Experimentation to Production: Navigating the MLOps Journey in Financial Services

Many organizations reach a crossroads in their machine learning work: they have experimented successfully with ML models, but now need to scale those operations while staying compliant and efficient. This post explores common challenges and strategic considerations for teams moving from experimental ML projects to production-ready systems.

The Evolution of ML Team Dynamics

One of the most critical aspects of scaling ML operations is managing team growth and knowledge sharing. Many organizations start with a small, tight-knit team of ML practitioners who can “get by” with minimal MLOps infrastructure. However, as teams expand and new members join, the need for structured workflows and standardized processes becomes increasingly apparent.

Key considerations for growing ML teams include:

- Establishing standardized onboarding processes for new team members

- Creating clear documentation and knowledge sharing protocols

- Implementing version control for both code and models

- Defining clear roles and responsibilities within the ML pipeline
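To make the "version control for both code and models" point concrete, here is a minimal sketch of a registry entry that ties a model artifact to the code that produced it. The names `ModelVersion` and `register_model`, the model name, and the commit string are all hypothetical and chosen for illustration; a real team would more likely rely on a dedicated model registry than on hand-rolled records.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelVersion:
    """One registry entry tying a model artifact to the code that built it."""
    name: str
    artifact_sha256: str  # fingerprint of the serialized model bytes
    code_commit: str      # Git commit of the training code (supplied by the caller)
    params: dict          # hyperparameters used for this training run


def register_model(name: str, artifact: bytes, code_commit: str, params: dict) -> ModelVersion:
    """Build a registry entry; identical bytes always yield the same fingerprint."""
    digest = hashlib.sha256(artifact).hexdigest()
    return ModelVersion(name, digest, code_commit, params)


def to_json(version: ModelVersion) -> str:
    """Serialize an entry with sorted keys so that diffs between entries stay stable."""
    return json.dumps(asdict(version), sort_keys=True)


# Hypothetical example values; the bytes stand in for a real serialized model.
entry = register_model("credit-risk", b"fake-model-bytes", "abc1234", {"max_depth": 4})
print(to_json(entry))
```

Because the fingerprint is derived from the artifact itself, two team members can check whether they are looking at the same model without trusting file names or timestamps.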

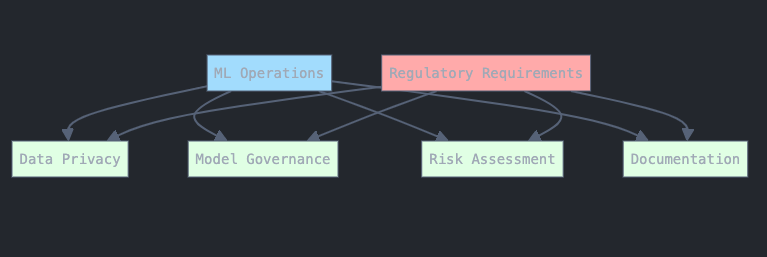

Regulatory Compliance in Financial ML

Financial services organizations face unique challenges when implementing ML systems, particularly around regulatory compliance and data privacy. Many companies are excited about the possibilities of Large Language Models (LLMs), but before adopting them they need to weigh:

- Data privacy requirements and regulatory frameworks

- Model governance and auditability

- Risk assessment and mitigation strategies

- Documentation requirements for regulatory compliance
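One way to think about auditability is an append-only log in which each entry's hash covers the previous entry, so later edits become detectable. The sketch below is an illustration under stated assumptions, not a compliance-grade implementation: `append_entry`, `verify`, and the event fields are hypothetical names, and a production system would also need durable storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical events recording which model version made which decision.
audit_log = []
append_entry(audit_log, {"model": "credit-risk", "version": "v3", "decision": "approve"})
append_entry(audit_log, {"model": "credit-risk", "version": "v3", "decision": "decline"})
print(verify(audit_log))
```

Changing any recorded event after the fact causes `verify` to return `False`, which gives reviewers a cheap integrity check on the decision history.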

The RAG Approach: A Pragmatic Solution for Enterprise AI

Retrieval-Augmented Generation (RAG) has emerged as a practical approach for organizations looking to leverage AI capabilities while maintaining control over their data and operations. This approach offers several advantages:

- Reduced dependency on external API providers, provided retrieval and generation run on infrastructure the organization controls

- Better control over data privacy and security

- Ability to incorporate domain-specific knowledge

- More predictable operational costs
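The core RAG loop is small: retrieve the passages most relevant to a question, then hand them to the model as context. The sketch below shows only that retrieval-and-prompt step, using simple word-count cosine similarity as a stand-in for a real embedding model and vector store. The function names and the three sample documents are invented for illustration, and the call to an actual LLM is deliberately left out.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a toy stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list, k: int = 2) -> list:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list, k: int = 2) -> str:
    """Assemble the prompt that would be sent to a language model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


# Invented internal-policy snippets, used only to exercise the retrieval step.
DOCS = [
    "Wire transfers above the internal review threshold require a second approver.",
    "Customer onboarding requires identity verification before account opening.",
    "Model retraining is scheduled after each quarterly data refresh.",
]
print(build_prompt("Who must approve large wire transfers?", DOCS, k=1))
```

Because the documents stay in a store the organization manages and only the selected passages reach the model, this structure is what makes the data-control and domain-knowledge advantages above possible.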

Scaling MLOps: When to Level Up Your Infrastructure

Many organizations struggle with determining the right time to invest in more sophisticated MLOps tools and infrastructure. Here are key indicators that it’s time to upgrade your MLOps stack:

- Increased frequency of model training and deployment

- Growing team size and complexity

- Need for better model versioning and tracking

- Requirements for enhanced collaboration and access control

- Rising costs of manual operations and maintenance

Future-Proofing Your ML Infrastructure

As the ML landscape continues to evolve, organizations need to consider how their infrastructure choices today will impact their operations tomorrow. Key considerations include:

- Cloud vendor independence

- Scalability of chosen solutions

- Flexibility to incorporate new technologies

- Cost optimization strategies

- Team growth and training requirements
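Cloud vendor independence often comes down to keeping pipeline code behind a narrow interface. The following sketch assumes a hypothetical `ArtifactStore` interface with two interchangeable backends; none of these names come from a specific library, and a cloud-backed implementation would simply be a third class with the same two methods.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class ArtifactStore(Protocol):
    """The only storage operations the pipeline is allowed to depend on."""

    def save(self, key: str, data: bytes) -> None: ...

    def load(self, key: str) -> bytes: ...


class LocalStore:
    """Filesystem backend, suitable for development or on-premises use."""

    def __init__(self, root: str) -> None:
        self.root = Path(root)

    def save(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def load(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


class InMemoryStore:
    """Dictionary backend, handy for unit tests."""

    def __init__(self) -> None:
        self._blobs = {}

    def save(self, key: str, data: bytes) -> None:
        self._blobs[key] = data

    def load(self, key: str) -> bytes:
        return self._blobs[key]


def publish_model(store: ArtifactStore, name: str, version: str, weights: bytes) -> str:
    """Pipeline code sees only the interface, never a vendor SDK."""
    key = f"{name}/{version}/model.bin"
    store.save(key, weights)
    return key


# The same pipeline function works against either backend.
with tempfile.TemporaryDirectory() as tmp:
    local = LocalStore(tmp)
    key = publish_model(local, "credit-risk", "v1", b"weights")
    print(key, local.load(key) == b"weights")
```

Switching providers then means writing one new backend class rather than touching every training and deployment script.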

Conclusion

The journey from experimental ML projects to production-ready systems is complex and multifaceted. Success requires careful consideration of team dynamics, regulatory requirements, and infrastructure choices. Organizations should focus on building flexible, scalable systems that can grow with their needs while maintaining compliance and operational efficiency.

Remember: the best time to invest in proper MLOps infrastructure isn’t when you’re already feeling the pain of scale; it’s when you can see that pain coming on the horizon.