Compare ZenML vs

ZenML vs AWS SageMaker: Supercharge Your ML Workflows

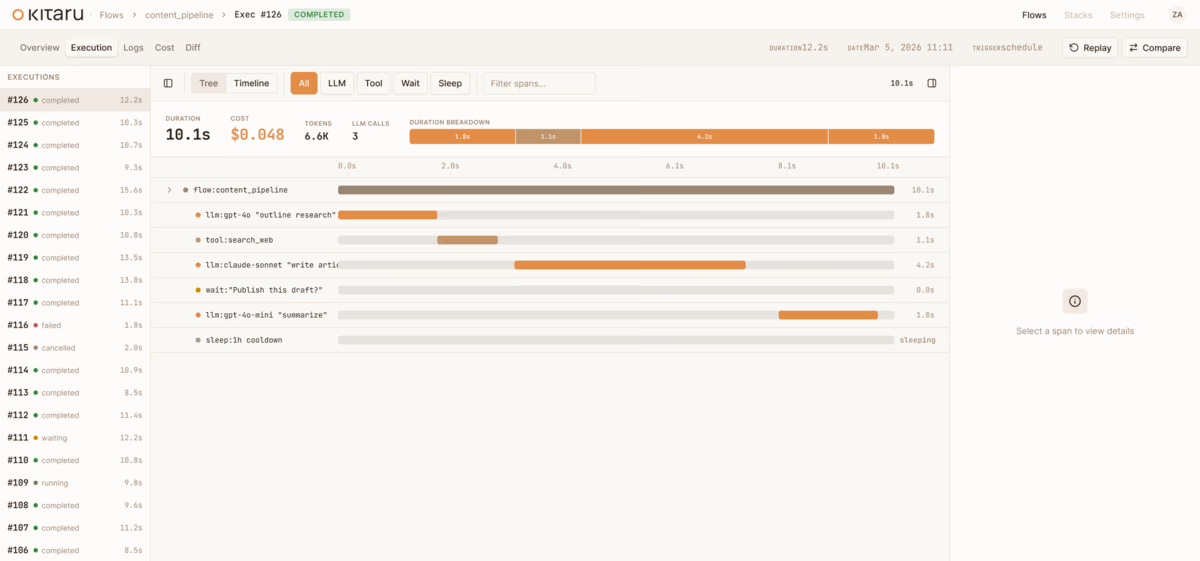

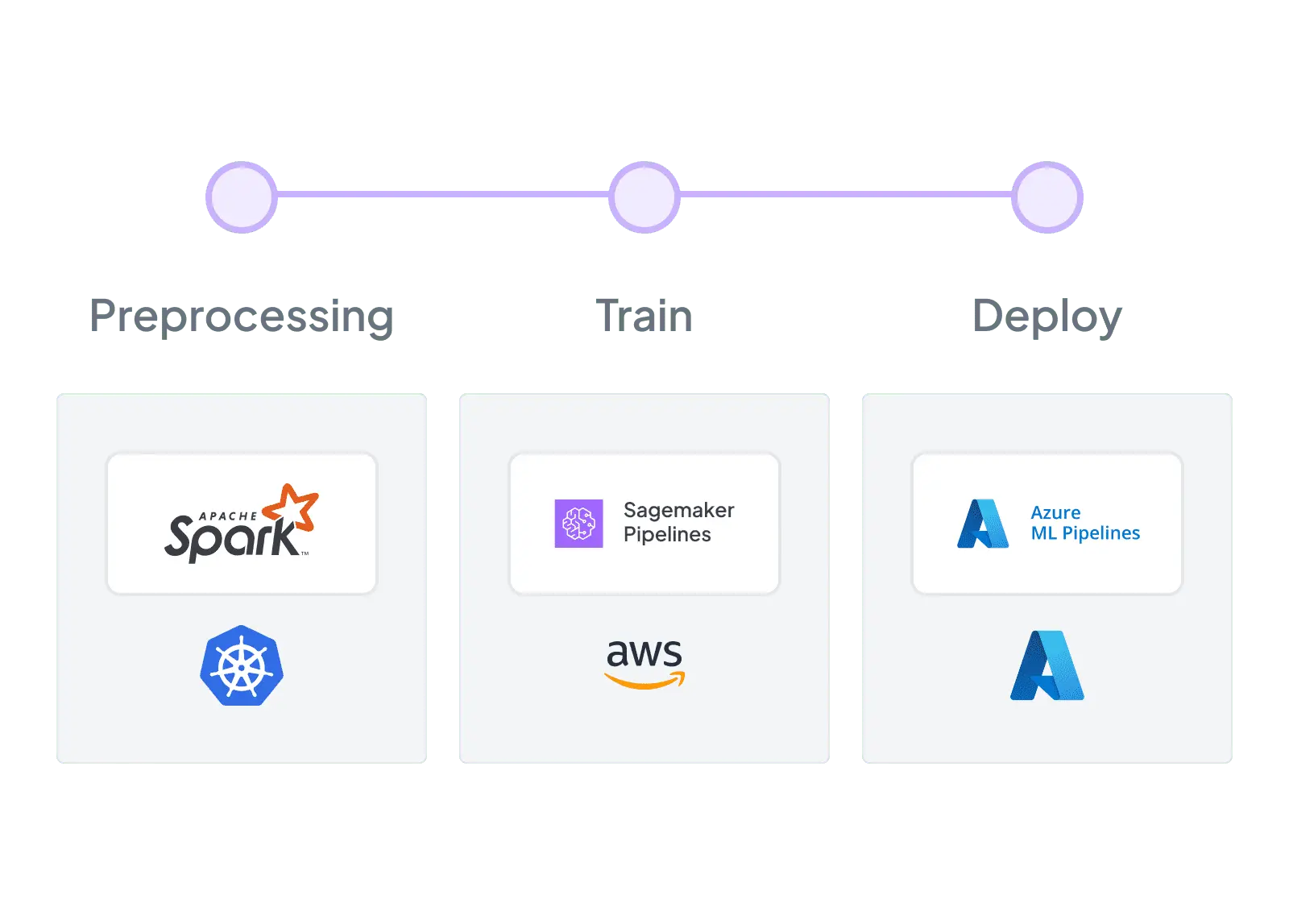

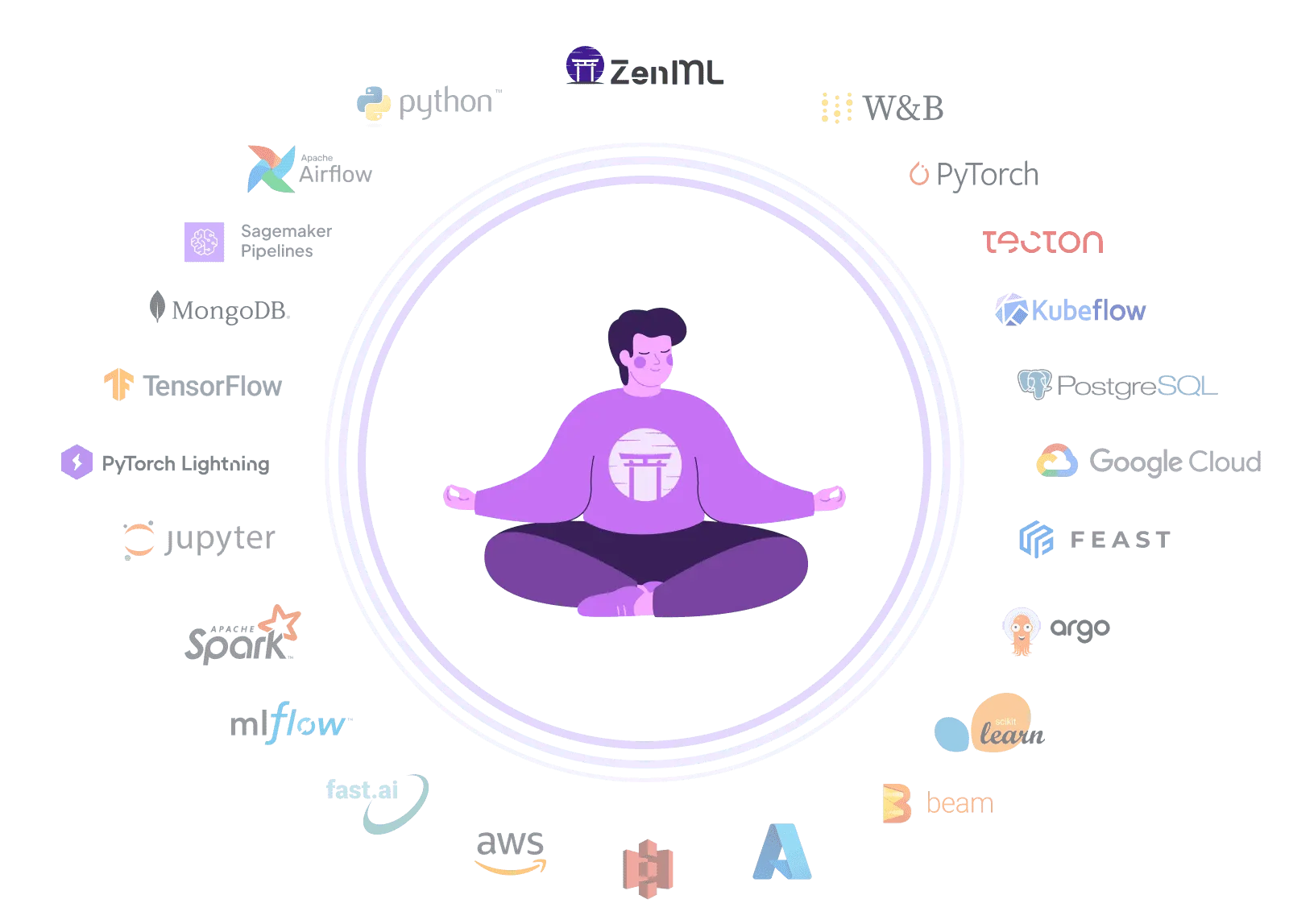

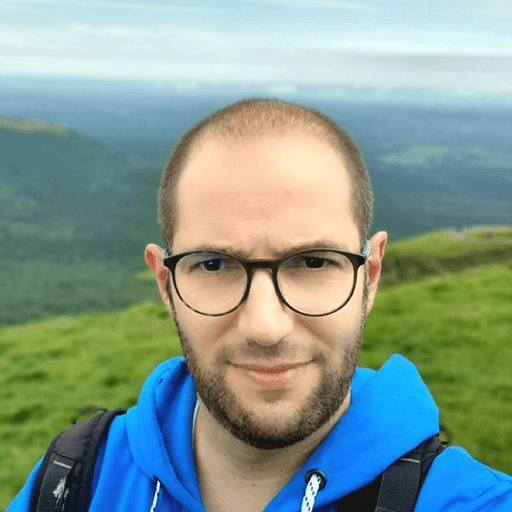

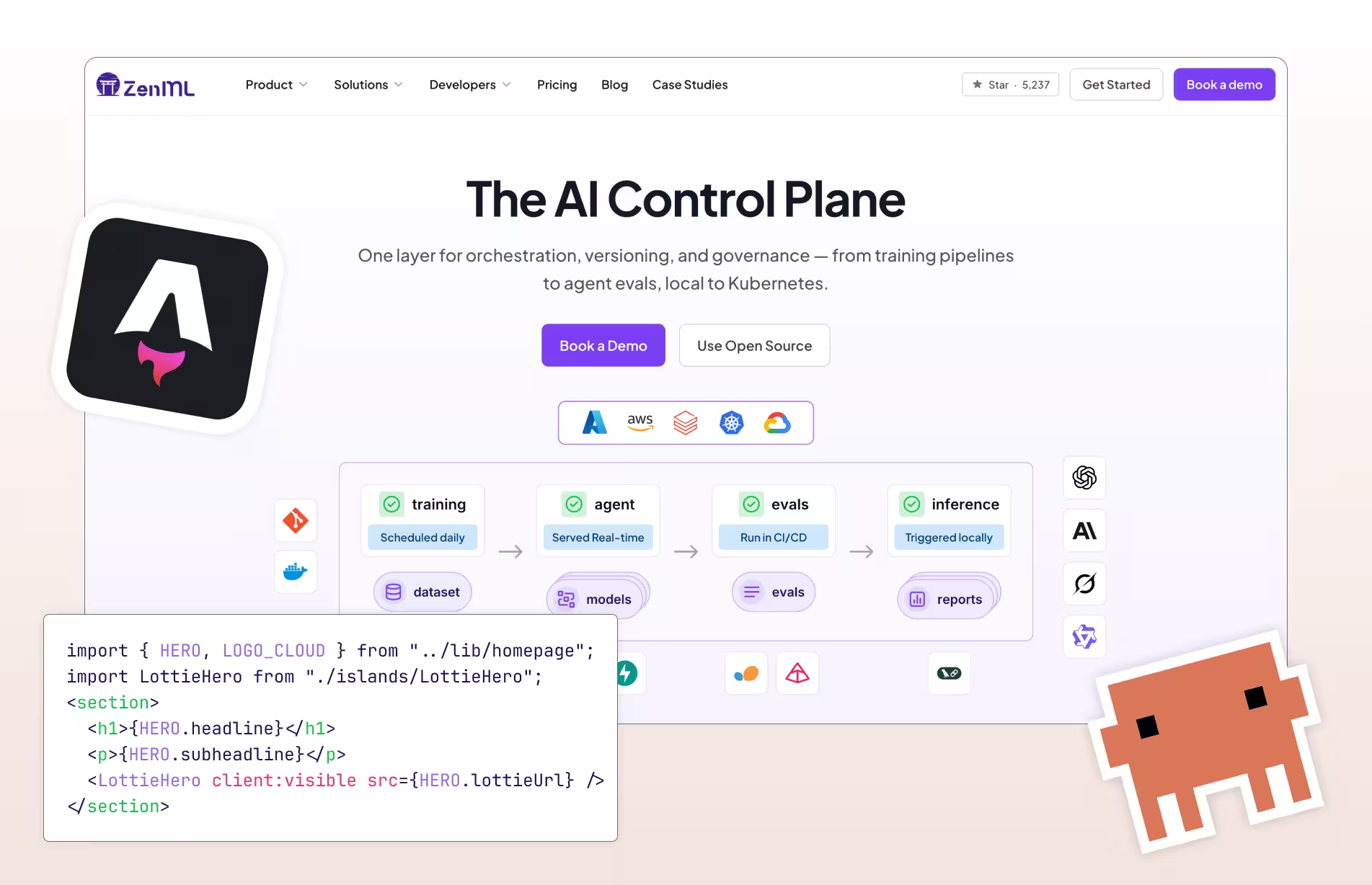

Unlock the full potential of your machine learning projects with ZenML, a flexible alternative to AWS SageMaker. While SageMaker offers a comprehensive cloud platform for ML, ZenML provides a vendor-neutral approach to building, training, and deploying high-quality models at scale. ZenML's intuitive workflow management capabilities extend beyond a single cloud provider, offering the flexibility to work across various environments and tools. Unlike SageMaker's AWS-centric ecosystem, ZenML allows you to accelerate your time-to-market and drive innovation across your organization without being locked into a specific cloud infrastructure, giving you the freedom to adapt your ML workflows as your needs evolve.