| Workflow Orchestration | Provides a flexible and user-friendly orchestration layer on top of Kubeflow | Offers powerful Kubernetes-native orchestration for ML workflows |

| Ease of Use | Simplifies the adoption and management of Kubeflow pipelines with an intuitive interface | Requires Kubernetes expertise to effectively utilize its features |

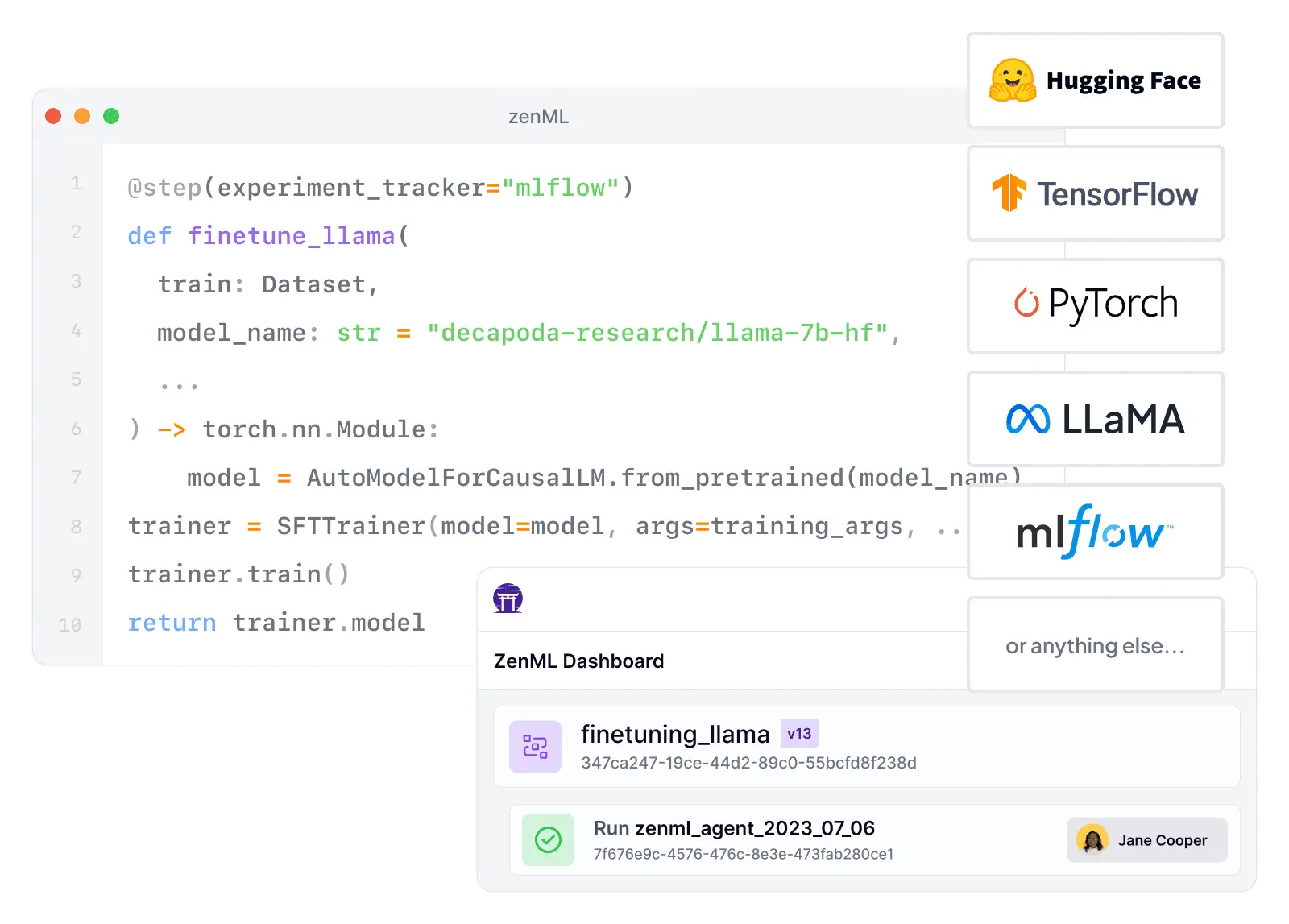

| Integration Flexibility | Seamlessly integrates Kubeflow with other MLOps tools for a customized stack | Primarily focuses on Kubernetes-based integrations and extensions |

| Switch your orchestrator, keep your code | Keep the same code when switching orchestrator | Requires significant rewriting to use Kubeflow code with a different orchestrator |

| Pipeline Customization | Enables easy customization and extension of Kubeflow pipelines | Allows customization but may require more Kubernetes knowledge |

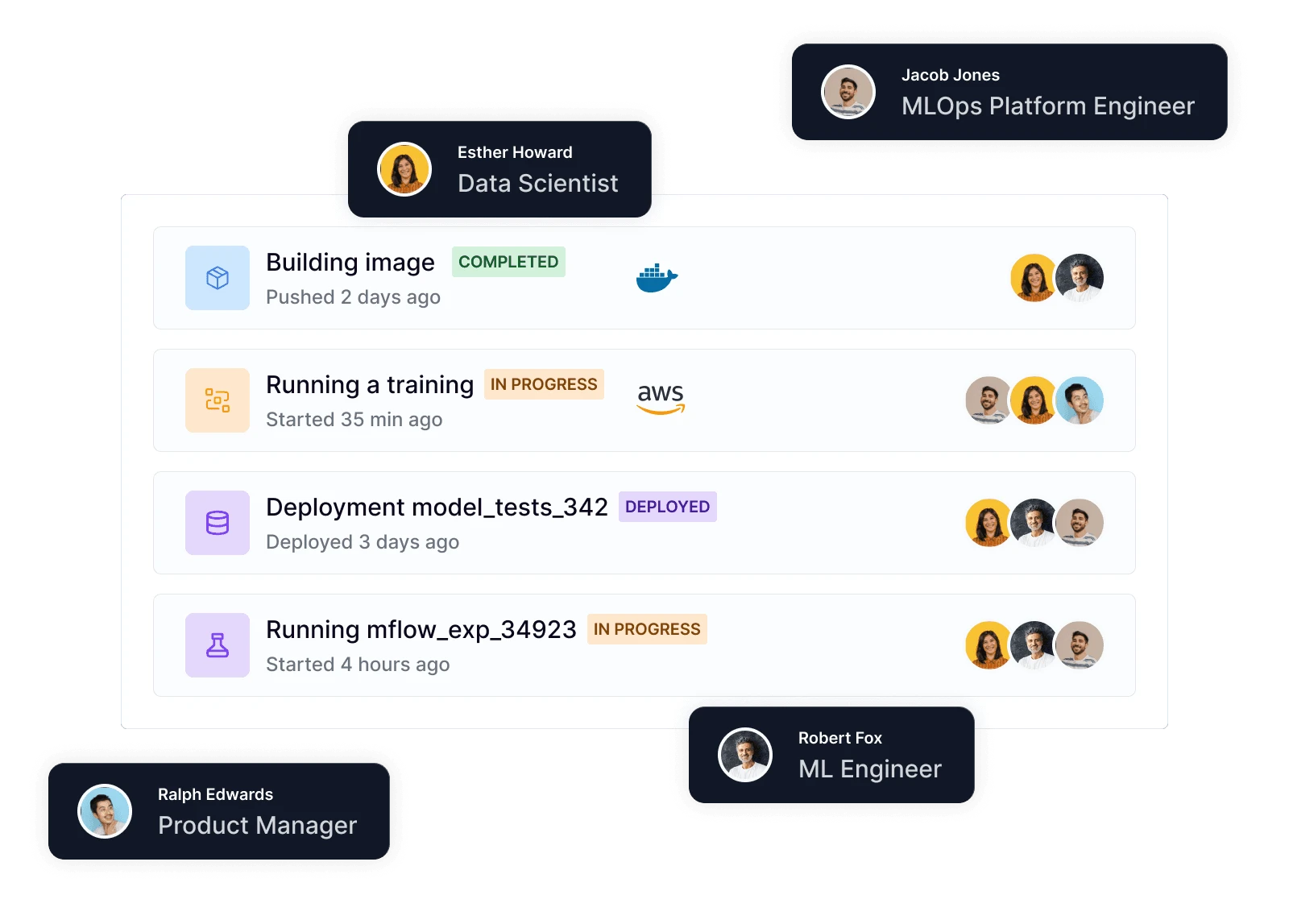

| Collaborative MLOps | Facilitates collaboration among teams with version control and governance features | Provides collaboration features but may require additional setup |

| Scalability | Leverages Kubeflow's scalability while providing an abstraction layer for ease of use | Highly scalable for large-scale ML workflows on Kubernetes |

| Experiment Tracking | Integrates with MLflow and other tools for comprehensive experiment tracking | Offers Kubeflow Metadata for experiment tracking and artifact management |

| Model Deployment | Simplifies the deployment of models using Kubeflow with pre-built integrations | Supports various deployment options, including Kubeflow Serving |

| Monitoring and Logging | Provides centralized monitoring and logging for Kubeflow pipelines | Offers Kubeflow Metadata for logging and monitoring |

| Community and Support | Growing community with active support and resources | Large and active community with extensive resources and support |

| MLOps Lifecycle Coverage | Covers the entire MLOps lifecycle, from data preparation to model monitoring | Focuses primarily on orchestration, deployment, and serving |

| Learning Curve | Reduces the learning curve for adopting Kubeflow with a user-friendly abstraction layer | Requires Kubernetes expertise to effectively utilize its full set of features |

| Hybrid and Multi-Cloud | Supports hybrid and multi-cloud deployments with Kubeflow integration | Enables hybrid and multi-cloud deployments on Kubernetes |

| GPU and Distributed Computing | Seamlessly leverages Kubeflow's GPU and distributed computing capabilities | Provides strong support for GPU and distributed computing workloads |
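
The orchestrator-switching row in the comparison above describes an abstraction in which pipeline code is written once and the execution backend is chosen separately. The following is a minimal, library-free sketch of that idea in plain Python. It is not the API of Kubeflow or of any real MLOps framework: `LocalOrchestrator`, `FakeKubeflowOrchestrator`, `PIPELINE`, and `run_pipeline` are all hypothetical names introduced only for illustration, and the "Kubeflow" backend here merely records which steps it would submit rather than talking to a cluster.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Protocol

State = Dict[str, Any]
Step = Callable[[State], State]


class Orchestrator(Protocol):
    """Anything that can execute an ordered list of steps (hypothetical interface)."""

    def run(self, steps: List[Step]) -> State: ...


@dataclass
class LocalOrchestrator:
    """Runs every step in-process, one after another."""

    name: str = "local"

    def run(self, steps: List[Step]) -> State:
        state: State = {"executed_on": self.name}
        for step in steps:
            state = step(state)
        return state


@dataclass
class FakeKubeflowOrchestrator:
    """Stand-in for a Kubernetes-backed runner.

    Assumption: a real backend would package each step and submit it to a
    cluster. This fake only records the step names and runs them locally.
    """

    name: str = "kubeflow"
    submitted: List[str] = field(default_factory=list)

    def run(self, steps: List[Step]) -> State:
        state: State = {"executed_on": self.name}
        for step in steps:
            self.submitted.append(step.__name__)
            state = step(state)
        return state


# Pipeline code: written once, with no reference to any orchestrator.
def load_data(state: State) -> State:
    return {**state, "rows": [1.0, 2.0, 3.0]}


def train(state: State) -> State:
    rows = state["rows"]
    return {**state, "model_mean": sum(rows) / len(rows)}


PIPELINE: List[Step] = [load_data, train]


def run_pipeline(orchestrator: Orchestrator) -> State:
    """Execute the same pipeline on whichever backend is passed in."""
    return orchestrator.run(PIPELINE)


if __name__ == "__main__":
    print(run_pipeline(LocalOrchestrator()))
    print(run_pipeline(FakeKubeflowOrchestrator()))
```

Only the object passed to `run_pipeline` changes between the two runs; `load_data` and `train` are untouched. That separation is what the table row refers to, whereas code written directly against one orchestrator's SDK has no such seam to swap at.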