Compare ZenML vs

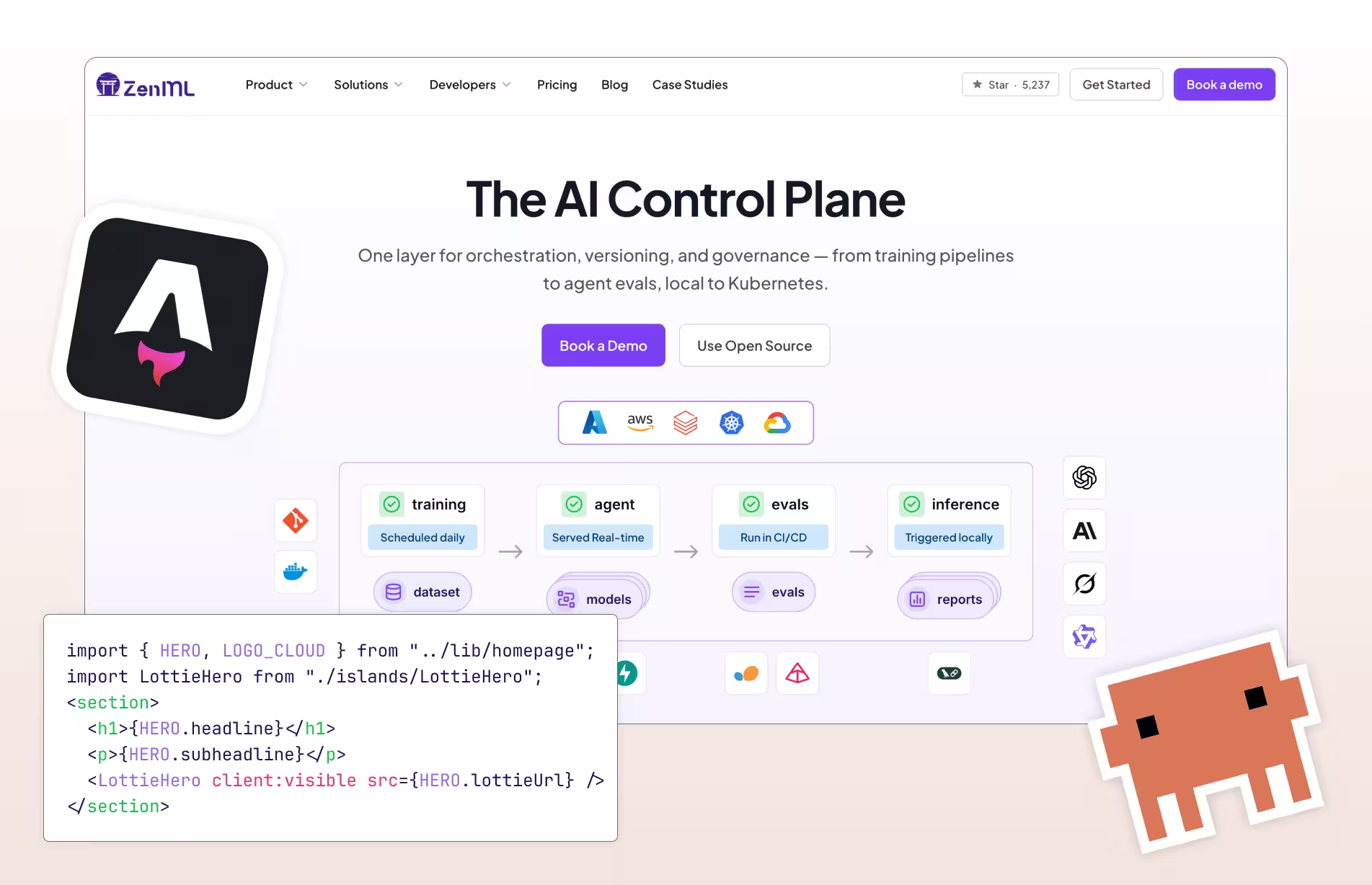

Open source MLOps stack

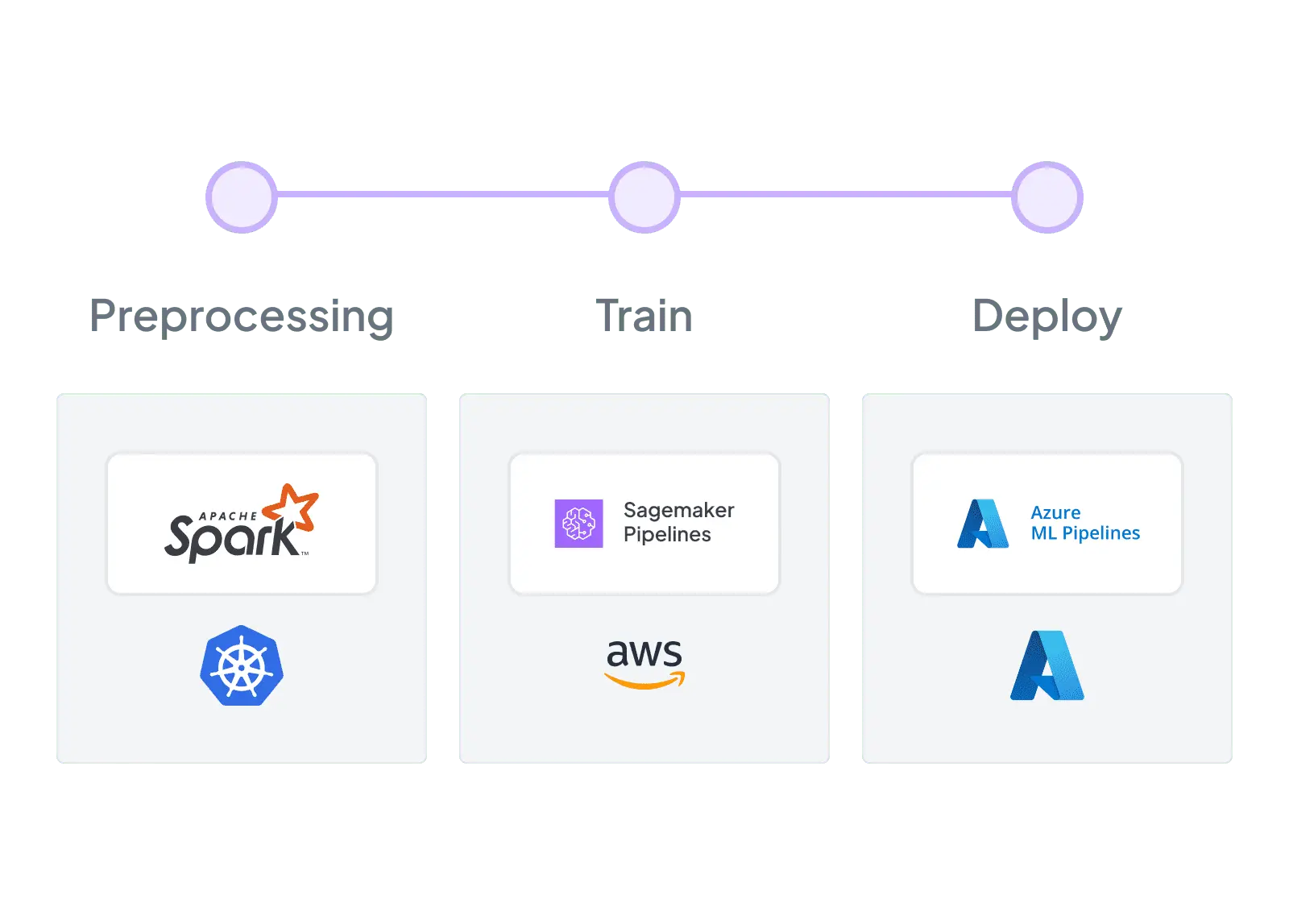

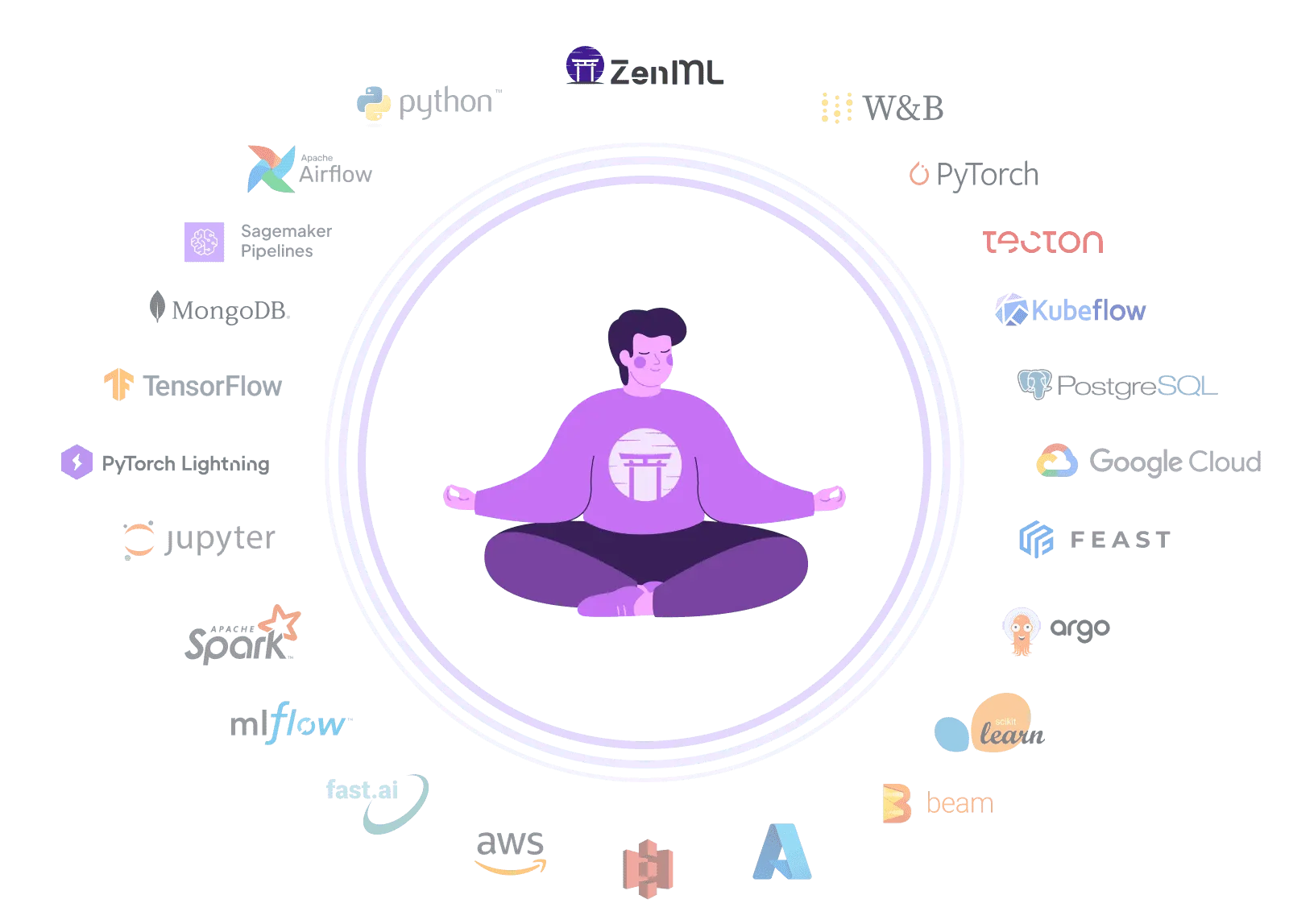

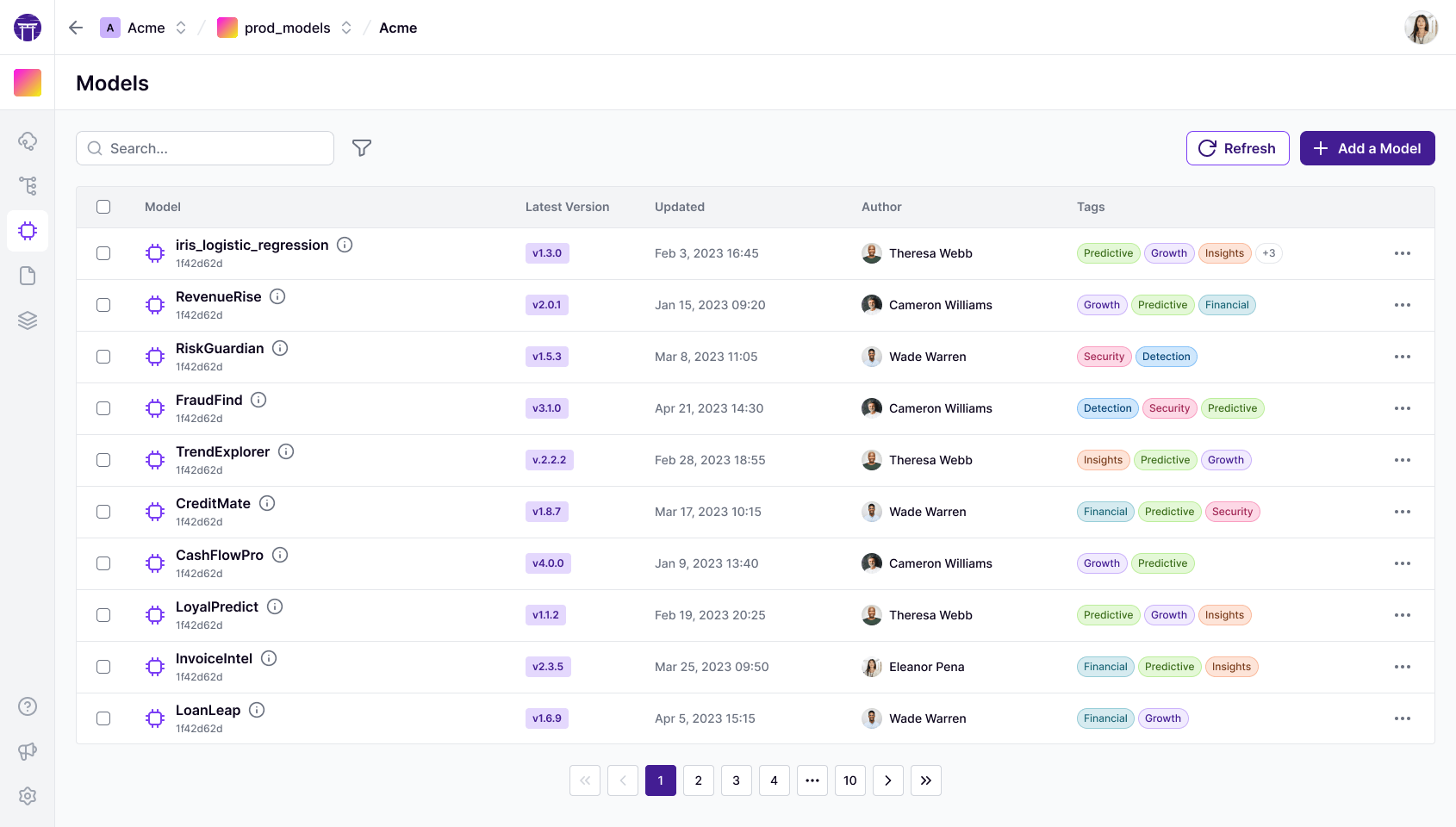

ZenML offers a flexible, open-source alternative to Valohai for ML pipeline orchestration. Unlike Valohai's closed-source, all-in-one platform, ZenML seamlessly integrates with your existing infrastructure. Enjoy ZenML's intuitive Python-based SDK with decorators, while avoiding vendor lock-in. Accelerate your ML initiatives with ZenML's adaptable framework, allowing you to choose preferred tools for datasets, hyperparameter tuning, and distributed processing.