Ship ML pipelines with confidence

Predictable, transparent pricing that scales with value.

Starter

For small teams

500 pipeline runs

1 project

1 snapshot

- Model Control Plane

- Artifact Control Plane

- 1 workspace

- Unlimited team members

- Basic support

Growth

For growing teams

2,000 pipeline runs

3 projects

5 snapshots

Everything in Starter, plus:

- Advanced Native Scheduling

- Webhooks & Triggers

- Priority support

Scale

For scaling teams

5,000 pipeline runs

10 projects

20 snapshots

Everything in Growth, plus:

- Codespaces (Remote IDE)

- Priority support

Enterprise

For organizations

Unlimited pipeline runs

Unlimited projects

Unlimited snapshots

Everything in Scale, plus:

- SSO (SAML/OIDC)

- RBAC (Custom Roles)

- Audit Logs

- Regional Deployment

- On-prem / Hybrid

- SOC2 & GDPR

- Professional Services

- Dedicated support + SLA

Compare Plans

| Feature | Starter | Growth | Scale | Enterprise |
|---|---|---|---|---|
| Price | $399/mo | $999/mo | $2,499/mo | Custom |
| Pipeline Runs/mo | 500 | 2,000 | 5,000 | Unlimited |
| Projects | 1 | 3 | 10 | Unlimited |
| Snapshots | 1 | 5 | 20 | Unlimited |
| Workspaces | 1 | 1 | 1 | Custom |
| Team members | Unlimited | Unlimited | Unlimited | Unlimited |
| Pro Platform Features | | | | |
| Model Control Plane | ✓ | ✓ | ✓ | ✓ |
| Artifact Control Plane | ✓ | ✓ | ✓ | ✓ |
| RBAC (Standard Roles) | | | | |
| Advanced Features | | | | |
| Advanced Native Scheduling (Coming Soon) | | ✓ | ✓ | ✓ |
| Webhooks & Triggers (Coming Soon) | | ✓ | ✓ | ✓ |
| Resource Management & Queueing (Coming Soon) | | | | |
| Codespaces (Remote IDE) (Coming Soon) | | | ✓ | ✓ |
| Enterprise Features | | | | |
| SSO (SAML/OIDC) | | | | ✓ |
| RBAC (Custom Roles) | | | | ✓ |
| Audit Logs | | | | ✓ |
| Regional Deployment | | | | ✓ |
| On-prem / Hybrid | | | | ✓ |
| SOC2 & GDPR | | | | ✓ |
| Professional Services (Workshops & Architecture Reviews) | | | | ✓ |
| Support | | | | |
| Support Level | Basic | Priority | Priority | Dedicated + SLA |

Are you a startup or an academic?

Early-stage companies building ML-powered products, universities, research institutions, and anyone with an educational use case can apply for special pricing on ZenML Pro features.

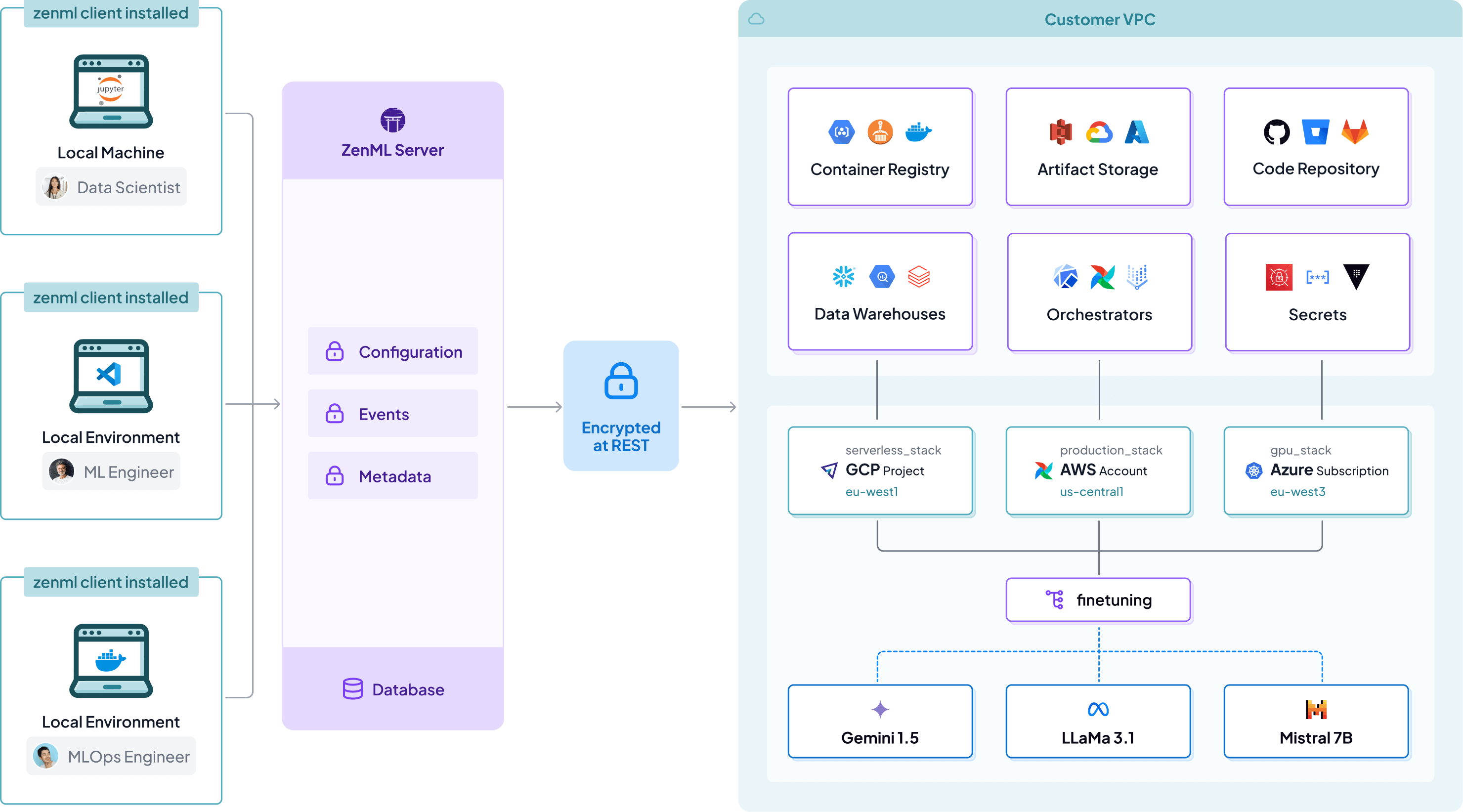

No compliance headaches

Your VPC, your data

ZenML is a metadata layer on top of your existing infrastructure, so all data and compute stay on your side.

ZenML is SOC2 and ISO 27001 Compliant

We Take Security Seriously

ZenML is SOC2 and ISO 27001 compliant. These certifications validate our adherence to industry-leading standards for data security, availability, and confidentiality, as part of our ongoing commitment to protecting your ML workflows and data.

Support

Frequently asked questions

Everything you need to know about the product.

What happens if I exceed my plan’s limits?

Can I self-host ZenML?

How do Run Template Triggers work?

What’s the difference between the managed plans and the open source version?

What kind of support is included in each plan?

Trusted by thousands of members from top companies

Join the ZenML Community and start improving your MLOps

1,000,000 pipelines run in ZenML

100,000 pipelines run last month

21,000 stacks registered in the last 12 months

200,000 models trained in the last 12 months

"ZenML offers the capability to build end-to-end ML workflows that seamlessly integrate with various components of the ML stack, such as different providers, data stores, and orchestrators."

Harold Giménez

SVP R&D at HashiCorp

Start deploying reproducible AI workflows today

Enterprise-grade MLOps platform trusted by thousands of companies in production.