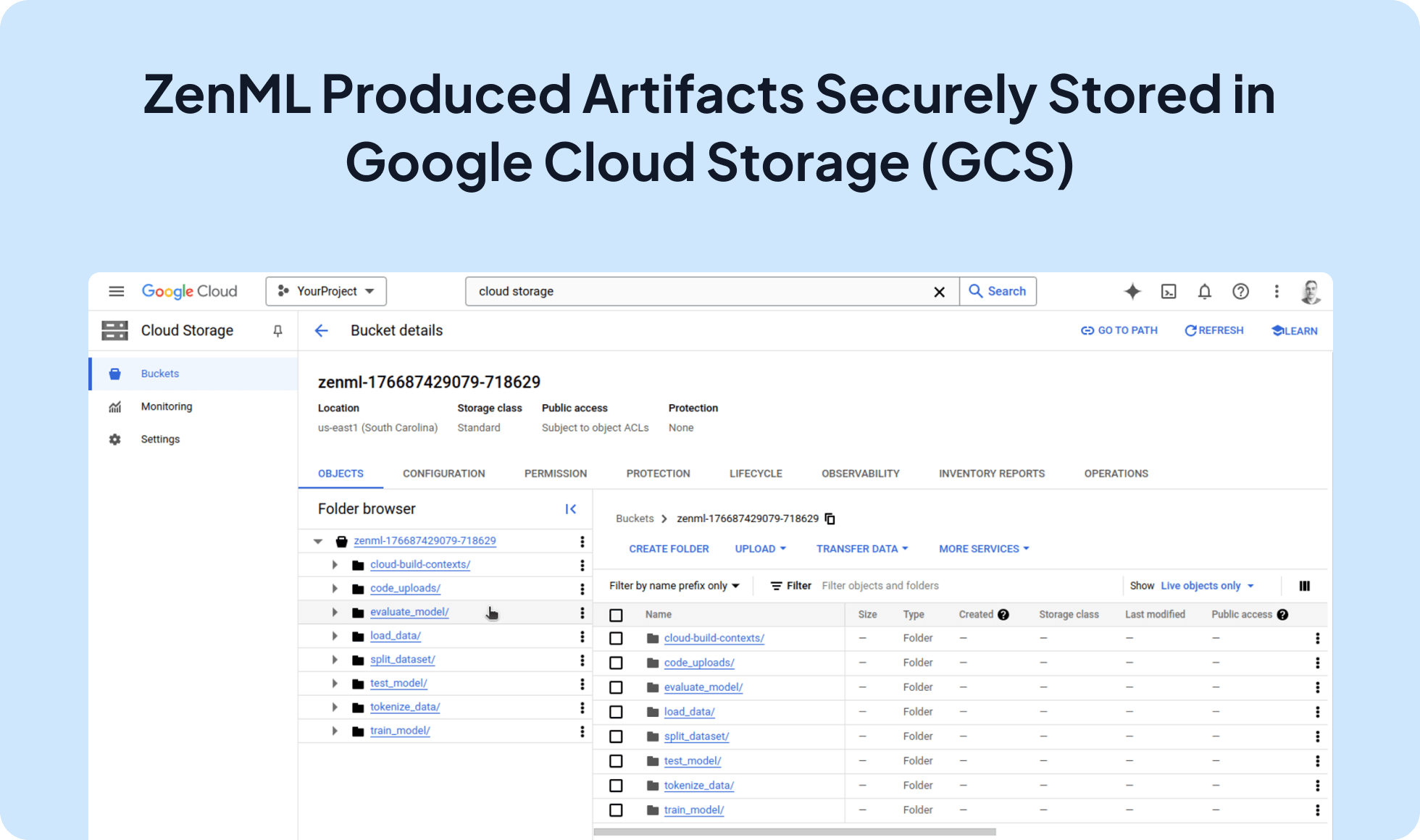

Seamlessly store your pipeline step outputs with Google Cloud Storage (GCS)

Integrate Google Cloud Storage (GCS) with ZenML to use it as a scalable, reliable artifact store for your ML workflows. The integration stores pipeline artifacts in a GCS bucket so they can be shared across teammates and machines, which makes it a good fit for collaboration, remote execution, and production-grade MLOps.

Install the GCP integration and set your active stack:

    zenml integration install gcp
    zenml stack set ...

Then name a step's output so it is saved to the artifact store and can be loaded back by that name:

    from typing_extensions import Annotated

    from zenml import pipeline, step
    from zenml.client import Client


    @step
    def my_step(input_dict: dict) -> Annotated[dict, "dict_from_gcs"]:
        # The returned dict is saved to the artifact store under this name.
        output_dict = input_dict.copy()
        output_dict["message"] = "Store this in cloud storage"
        return output_dict


    @pipeline
    def my_pipeline(input_dict: dict):
        my_step(input_dict)


    if __name__ == "__main__":
        input_data = {"key": "value"}
        my_pipeline(input_data)

        # Access the remote artifact from local code
        data = Client().get_artifact_version(name_id_or_prefix="dict_from_gcs").load()
        print(
            "The artifact value you saved in the `my_pipeline` run is:\n "
            f"{data}"
        )
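To confirm that an artifact really landed in a bucket rather than on local disk, it can help to inspect where the artifact version is stored. The following is a minimal sketch, not part of the ZenML API: `split_gcs_uri` and `locate_artifact` are hypothetical helper names, and the sketch assumes the active stack uses a GCS artifact store, so the artifact's `uri` starts with `gs://`.

```python
from typing import Tuple


def split_gcs_uri(uri: str) -> Tuple[str, str]:
    """Split a gs:// URI into (bucket, object_prefix).

    Hypothetical helper for illustration. Raises ValueError if the URI
    does not use the gs:// scheme or has no bucket name.
    """
    scheme = "gs://"
    if not uri.startswith(scheme):
        raise ValueError(f"Not a GCS URI: {uri!r}")
    bucket, _, prefix = uri[len(scheme):].partition("/")
    if not bucket:
        raise ValueError(f"Missing bucket name in {uri!r}")
    return bucket, prefix


def locate_artifact(name: str) -> Tuple[str, str]:
    """Return (bucket, object_prefix) for the latest version of a named artifact.

    Assumes the active ZenML stack uses a GCS artifact store, so the
    artifact's URI starts with gs://.
    """
    # Imported lazily so split_gcs_uri works without ZenML installed.
    from zenml.client import Client

    artifact = Client().get_artifact_version(name_id_or_prefix=name)
    return split_gcs_uri(artifact.uri)
```

Calling `locate_artifact` with the artifact name given in the step's `Annotated` return type, after the pipeline has run, returns the bucket and object prefix. A `ValueError` means the artifact was written by a stack whose artifact store is not GCS.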