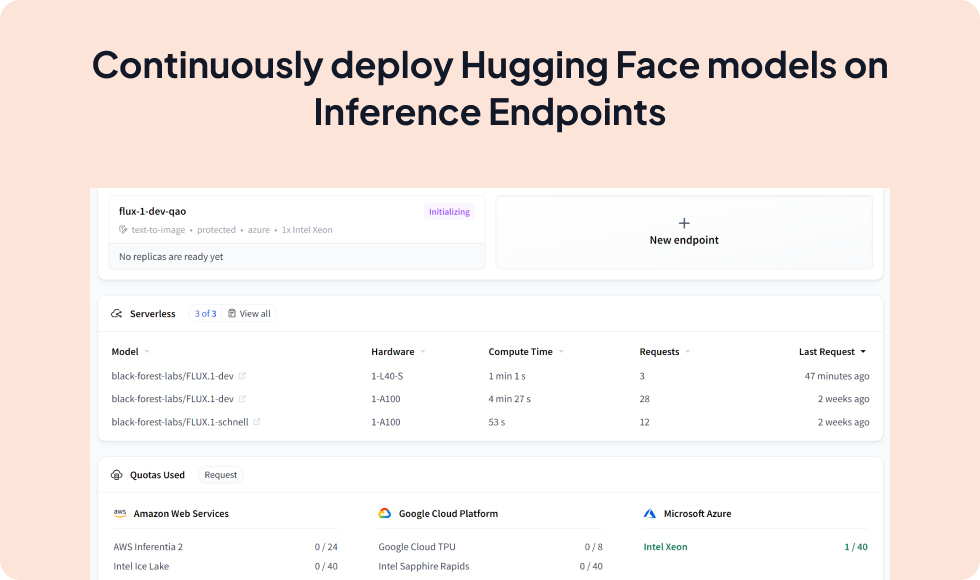

Effortlessly deploy Hugging Face models to production with ZenML

Integrate Hugging Face Inference Endpoints with ZenML to streamline the deployment of transformers, sentence-transformers, and diffusers models. This integration allows you to leverage Hugging Face's secure, scalable infrastructure for hosting models, while managing the deployment process within your ZenML pipelines.

    from typing import Annotated

    from zenml import pipeline, step
    from zenml.integrations.huggingface.services import HuggingFaceServiceConfig
    from zenml.integrations.huggingface.services.huggingface_deployment import (
        HuggingFaceDeploymentService,
    )
    from zenml.integrations.huggingface.steps import huggingface_model_deployer_step


    @step
    def predictor(
        service: HuggingFaceDeploymentService,
    ) -> Annotated[str, "predictions"]:
        # Run an inference request against the deployed prediction service.
        # load_live_data() is a placeholder for your own data-loading function.
        data = load_live_data()
        prediction = service.predict(data)
        return prediction


    @pipeline
    def deploy_and_infer():
        # Describe the endpoint to create. Check the field names against the
        # HuggingFaceServiceConfig of your installed ZenML version.
        service_config = HuggingFaceServiceConfig(
            model_name="text-classification-model",
            accelerator="gpu",
            repository="myorg/text-classifier",
            task="text-classification",
        )
        # Deploy the model, then pass the resulting service to the predictor.
        service = huggingface_model_deployer_step(service_config=service_config)
        predictor(service)
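
The `predictor` step above calls `load_live_data()`, which the snippet leaves undefined. As a minimal sketch, assuming the endpoint accepts a single plain-text string, a stand-in could look like the following. The function body, the `path` parameter, and `SAMPLE_TEXT` are illustrative assumptions and not part of ZenML.

```python
from pathlib import Path
from typing import Optional

# Hypothetical fallback input, used when no file path is given.
SAMPLE_TEXT = "I really enjoyed using this product."


def load_live_data(path: Optional[str] = None) -> str:
    """Return one text sample to send to the prediction service.

    Illustrative helper only: reads a UTF-8 text file if `path` is given,
    otherwise falls back to SAMPLE_TEXT.
    """
    if path is None:
        return SAMPLE_TEXT
    text = Path(path).read_text(encoding="utf-8").strip()
    if not text:
        raise ValueError(f"No text found in {path}")
    return text
```

In a real pipeline this function would more likely pull from a queue, database, or feature store. The only requirement here is that it returns a value the deployed model's task can accept.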
