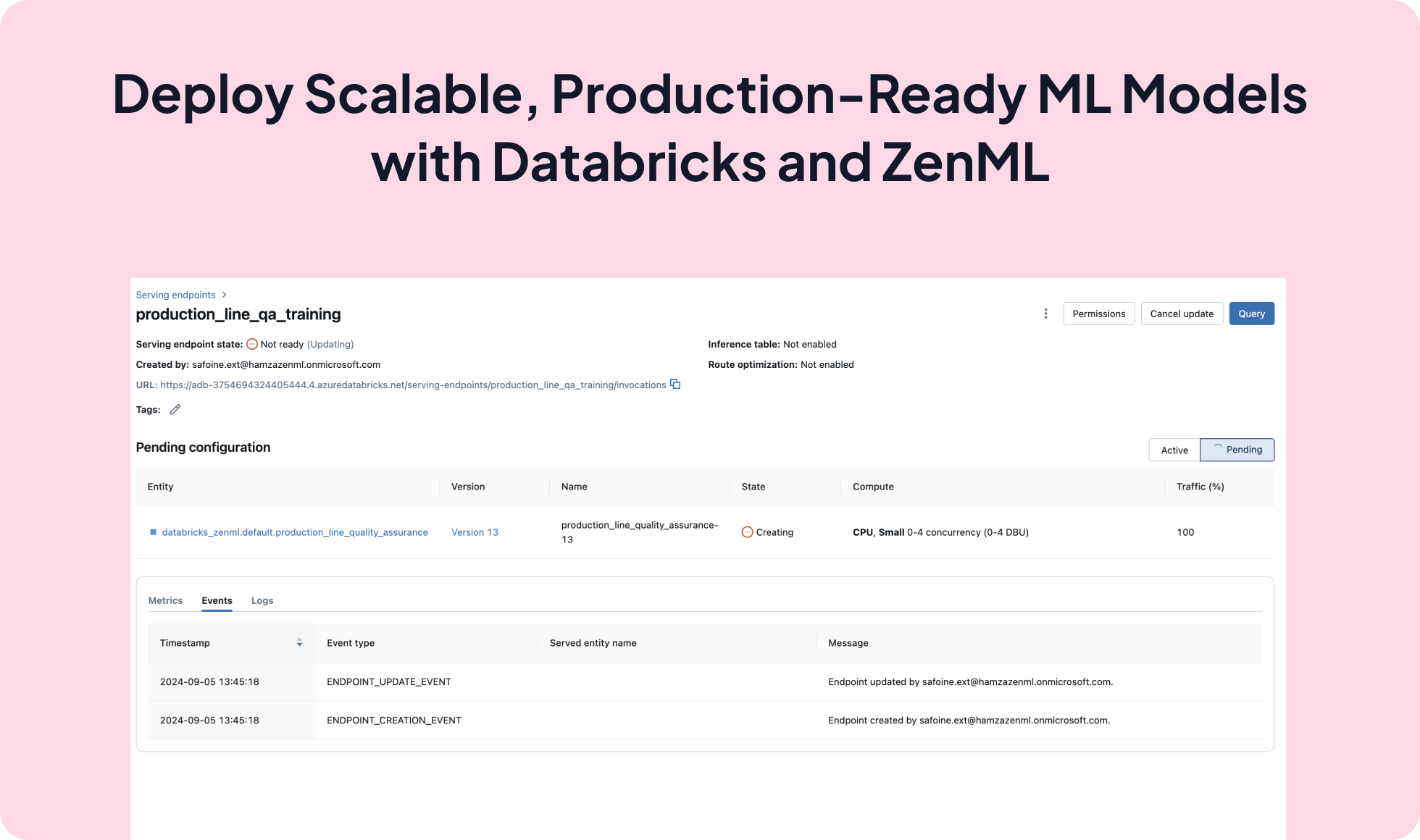

Deploy Scalable, Production-Ready ML Models with Databricks and ZenML

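Integrate Databricks Model Serving with ZenML to deploy and serve models directly from your pipelines. The integration provides a unified interface to deploy, govern, and query models, while Databricks' managed infrastructure handles scaling and enterprise security. The example below defines a custom step that deploys a registered model version through the model deployer in the active stack, then runs that step in a pipeline.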

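    from typing import Optional

    from typing_extensions import Annotated
    from zenml import ArtifactConfig, get_step_context, pipeline, step
    from zenml.client import Client
    from zenml.integrations.databricks.services.databricks_deployment import (
        DatabricksDeploymentConfig,
        DatabricksDeploymentService,
    )
    # Prebuilt deployer step shipped with the integration (not used by the custom step below)
    from zenml.integrations.databricks.steps.databricks_deployer import databricks_model_deployer_step
    from zenml.logger import get_logger

    logger = get_logger(__name__)

    # notify_on_failure (a failure hook) and notify_on_success (a step) are
    # assumed to be defined elsewhere in your project.


    @step(enable_cache=False)
    def deployment_deploy() -> (
        Annotated[
            Optional[DatabricksDeploymentService],
            ArtifactConfig(
                name="databricks_deployment", is_deployment_artifact=True
            ),
        ]
    ):
        # Model attached to the current run; assumes the pipeline or step is
        # configured with a ZenML Model that has "model_registry_version" metadata
        model = get_step_context().model

        # deploy predictor service
        zenml_client = Client()
        model_deployer = zenml_client.active_stack.model_deployer
        databricks_deployment_config = DatabricksDeploymentConfig(
            model_name=model.name,
            model_version=model.run_metadata["model_registry_version"].value,
            workload_size="Small",
            workload_type="CPU",
            scale_to_zero_enabled=True,
            endpoint_secret_name="databricks_token",
        )
        deployment_service = model_deployer.deploy_model(
            config=databricks_deployment_config,
            service_type=DatabricksDeploymentService.SERVICE_TYPE,
            timeout=1200,  # seconds to wait for the endpoint to become ready
        )
        logger.info(
            f"The deployed service info: {model_deployer.get_model_server_info(deployment_service)}"
        )
        return deployment_service


    @pipeline(on_failure=notify_on_failure)
    def databricks_deploy_pipeline():
        deployment_deploy()
        notify_on_success(after=["deployment_deploy"])


    if __name__ == "__main__":
        # Calling the decorated pipeline function triggers the run
        databricks_deploy_pipeline()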
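Once the endpoint is up, it can be queried over HTTP. The following is a minimal sketch that bypasses ZenML and uses only the Python standard library, assuming the Databricks serving REST path `/serving-endpoints/<endpoint-name>/invocations`, a bearer token for authentication, and a `dataframe_records` payload. The function names, workspace URL, endpoint name, and feature names are hypothetical placeholders, and the payload shape your model expects depends on its signature.

```python
import json
import urllib.request
from typing import Any, Dict, List


def build_invocation_request(
    workspace_url: str,
    endpoint_name: str,
    token: str,
    records: List[Dict[str, Any]],
) -> urllib.request.Request:
    """Build (but do not send) a scoring request for a serving endpoint."""
    # Assumed REST path for Databricks Model Serving invocations
    url = f"{workspace_url.rstrip('/')}/serving-endpoints/{endpoint_name}/invocations"
    # One dict per input row; adjust the payload to your model's signature
    body = json.dumps({"dataframe_records": records}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def query_endpoint(request: urllib.request.Request, timeout: float = 30.0) -> Any:
    """Send the request and decode the JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Placeholder values: replace with your workspace URL, endpoint name, and token
    request = build_invocation_request(
        workspace_url="https://example.cloud.databricks.com",
        endpoint_name="my-endpoint",
        token="dapi-placeholder",
        records=[{"feature_a": 1.0, "feature_b": 2.0}],
    )
    # Only inspect the request here; call query_endpoint(request) to send it
    print(request.get_method(), request.full_url)
```

Building the request separately from sending it lets you inspect the URL and payload before any network call. In practice the token would come from a secret store rather than being hard-coded, in line with the `endpoint_secret_name` used in the deployment config.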
