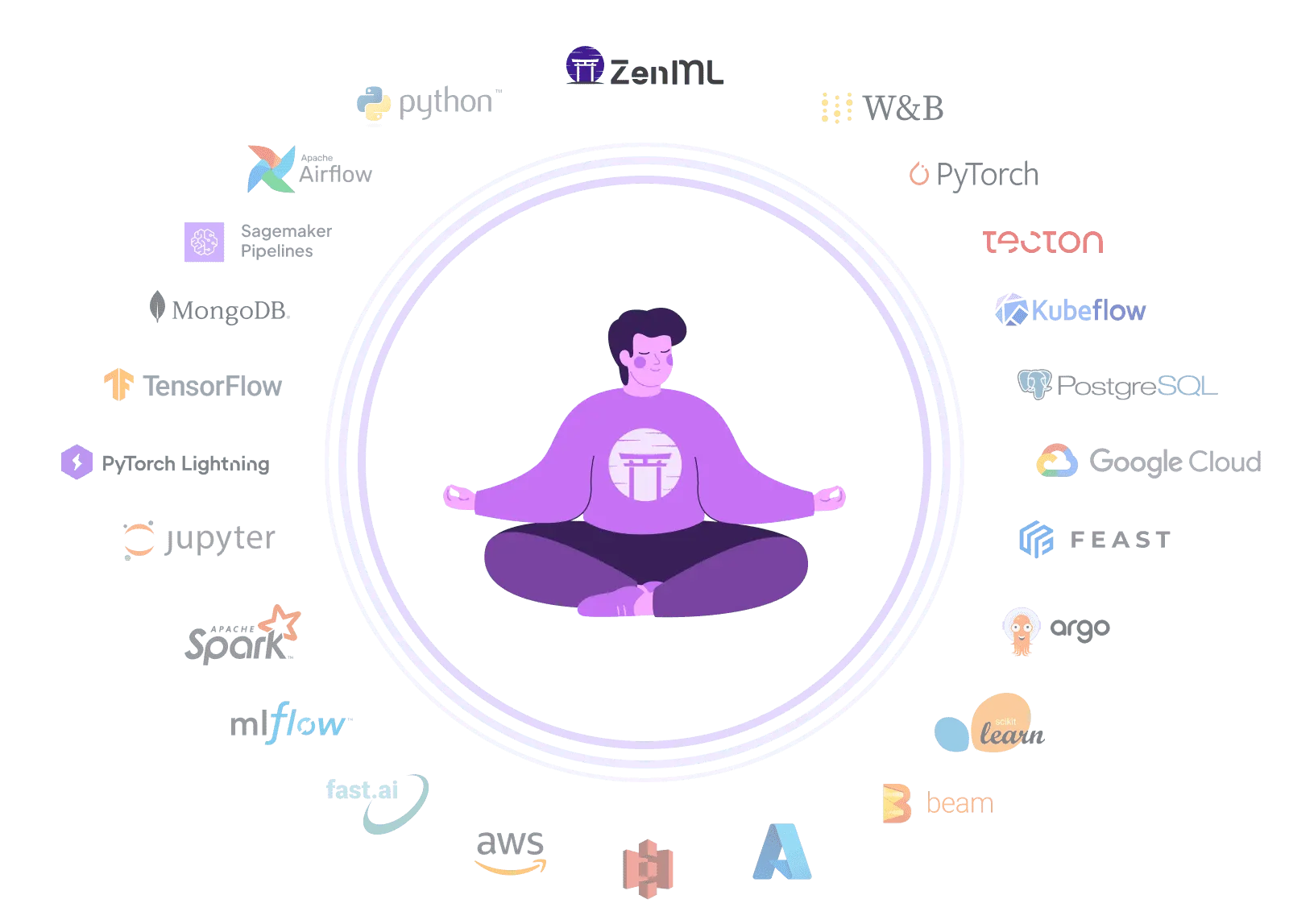

| Feature | ZenML | Domino |
| --- | --- | --- |
| Workflow Orchestration | Provides portable, code-defined pipelines that run on any orchestrator (Airflow, Kubeflow, local, etc.) via composable stacks | Offers Domino Flows (built on Flyte) with DAG orchestration, lineage tracking, and a platform monitoring UI |
| Integration Flexibility | Designed to integrate with any ML tool: swap orchestrators, trackers, artifact stores, and deployers without changing pipeline code | Broad enterprise integrations (Snowflake, Spark, MLflow, SageMaker), but consumed through Domino's platform abstraction |
| Vendor Lock-In | Open-source and vendor-neutral; pipelines are pure Python code portable across any infrastructure | Proprietary platform with moderate lock-in; uses Flyte and MLflow internally but ties workflows to Domino's control plane |
| Setup Complexity | Pip-installable; start locally with minimal infrastructure, then scale by connecting to cloud compute when ready | Enterprise deployment spectrum from SaaS to on-prem/hybrid, requiring Platform Operator and Kubernetes infrastructure |
| Learning Curve | Familiar Python-based pipeline definitions with simple decorators; fewer platform concepts to learn | Cohesive UI lowers the barrier for data scientists, but there are many platform concepts to learn (Projects, Workspaces, Jobs, Flows, Governance) |
| Scalability | Scales via the underlying orchestrator and infrastructure: leverage Kubernetes, cloud services, or distributed compute | Enterprise-grade scaling with hardware tiers, distributed clusters (Spark/Ray/Dask), and multi-region data planes |
| Cost Model | Open-source core is free; pay only for infrastructure. Optional managed service for enterprise features | Enterprise subscription pricing geared toward large organizations, with deployment options ranging from SaaS to on-prem |
| Collaborative Development | Collaboration through code sharing, version control, and the ZenML dashboard for pipeline visibility | Strong collaboration with shared Projects, interactive Workspaces, project templates, and model cards |
| ML Framework Support | Framework-agnostic: use any Python ML library in pipeline steps with automatic artifact serialization | Containerized environments support any framework; validated for scikit-learn, PyTorch, Spark, Ray, and more |
| Model Monitoring & Drift Detection | Integrates with monitoring tools like Evidently and Great Expectations as pipeline steps for customizable drift detection | Built-in monitoring with statistical tests (KL divergence, PSI, Chi-square), scheduled checks, and alerting |
| Governance & Access Control | Pipeline-level lineage, artifact tracking, RBAC, and model control plane for audit trails and approval workflows | Enterprise-grade governance with policy management, automated evidence collection, unified audit trail, and compliance certifications |
| Experiment Tracking | Integrates with any experiment tracker (MLflow, W&B, etc.) as part of your composable stack | MLflow-backed experiment tracking with autologging and manual logging, integrated into the platform UI |
| Reproducibility | Auto-versioned code, data, and artifacts for every pipeline run, giving portable reproducibility across any infrastructure | Strong reproducibility via environment snapshots, Flows lineage/versioning, and Git-based projects |
| Auto Retraining Triggers | Supports scheduled pipelines and event-driven triggers that can initiate retraining based on drift detection or performance thresholds | Scheduled Jobs and Flows with API-driven triggers; requires wiring monitoring alerts to job/flow execution |
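
To make the drift-detection and retraining rows more concrete, here is a minimal sketch in plain Python of a Population Stability Index (PSI) check that gates a retraining decision. It is not the API or implementation of either product: the function names `psi` and `should_retrain`, the ten equal-width bins, the epsilon floor for empty bins, and the 0.2 threshold (a commonly quoted rule of thumb) are all assumptions chosen for illustration.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `actual` relative to `expected`.

    Bins are equal-width over the range of the reference sample
    (an assumption; quantile bins are another common choice).
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("reference sample has zero range")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def should_retrain(expected: Sequence[float], actual: Sequence[float],
                   threshold: float = 0.2) -> bool:
    """Hypothetical trigger: retrain when PSI exceeds the threshold."""
    return psi(expected, actual) > threshold
```

In practice, a check like this would run as a scheduled pipeline step or monitoring job, with its boolean result wired to whatever mechanism starts the retraining pipeline, job, or flow.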