| Workflow Orchestration | Purpose-built ML pipeline orchestration with pluggable backends — Airflow, Kubeflow, Kubernetes, and more | Visual workflow execution on Alteryx Engine or Server — designed for analytics automation, not ML pipeline lifecycle |

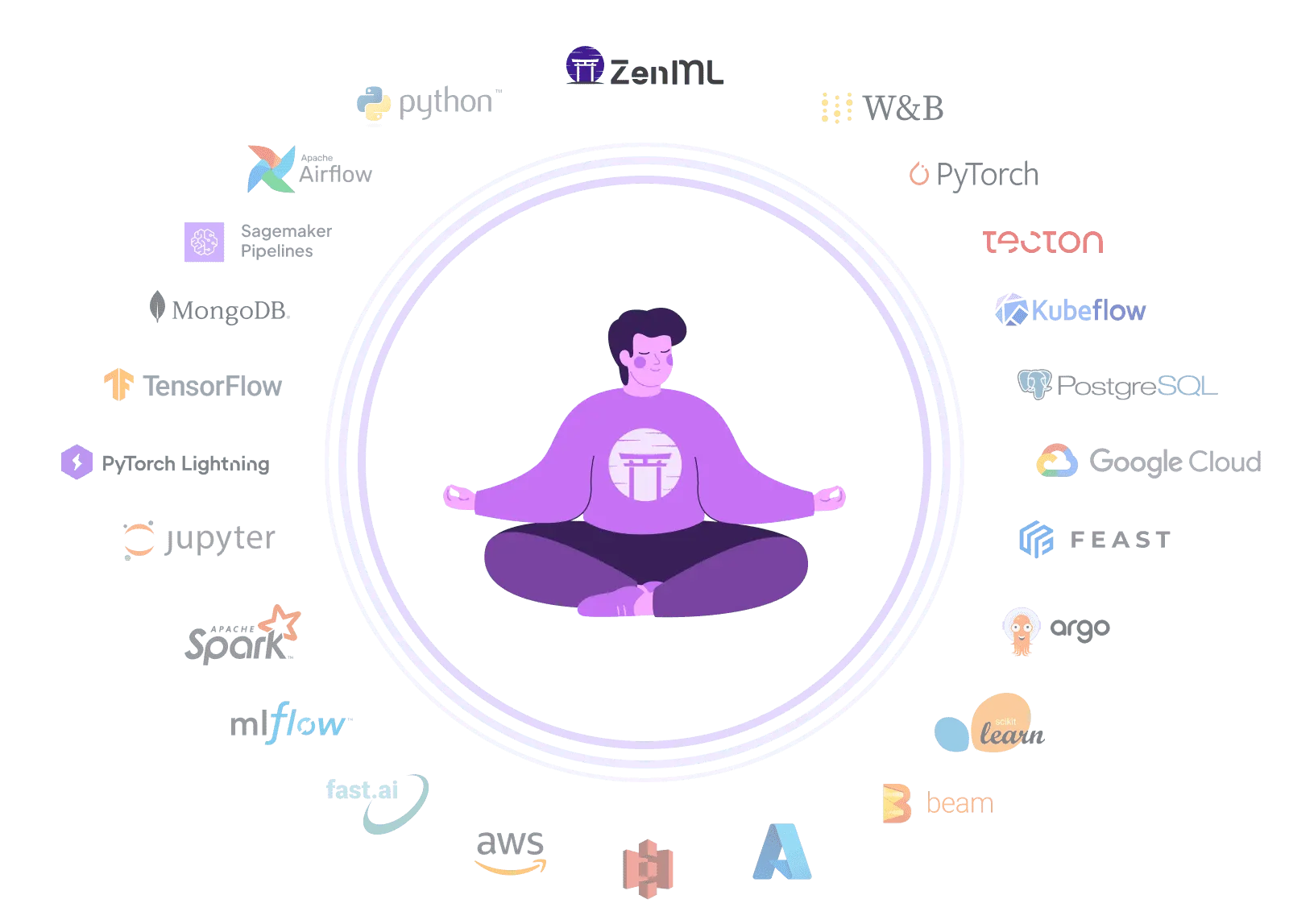

| Integration Flexibility | Composable stack with 50+ MLOps integrations — swap orchestrators, trackers, and deployers without code changes | Strong data source connectors (100+) but limited MLOps ecosystem integration — ML tools require custom Python/API code |

| Vendor Lock-In | Open-source Python pipelines run anywhere — switch clouds, orchestrators, or tools without rewriting code | Proprietary .yxmd workflow format locked to the Alteryx engine — workflows cannot run outside the Alteryx ecosystem |

| Setup Complexity | pip install zenml — start building pipelines in minutes with zero infrastructure, scale when ready | Windows desktop install plus Server administration (controller/worker architecture, MongoDB, licensing) for enterprise deployment |

| Learning Curve | Python-native API with decorators — familiar to any ML engineer or data scientist who writes Python | Exceptionally approachable drag-and-drop interface designed for business analysts and citizen data scientists |

| Scalability | Delegates compute to scalable backends — Kubernetes, Spark, cloud ML services — for unlimited horizontal scaling | AMP engine with multi-threading, in-database pushdown to Snowflake/Databricks, and Server worker scaling |

| Cost Model | Open-source core is free — pay only for your own infrastructure, with optional managed cloud for enterprise features | Per-seat licensing across Starter, Professional, and Enterprise tiers — pricing varies by edition and deployment model |

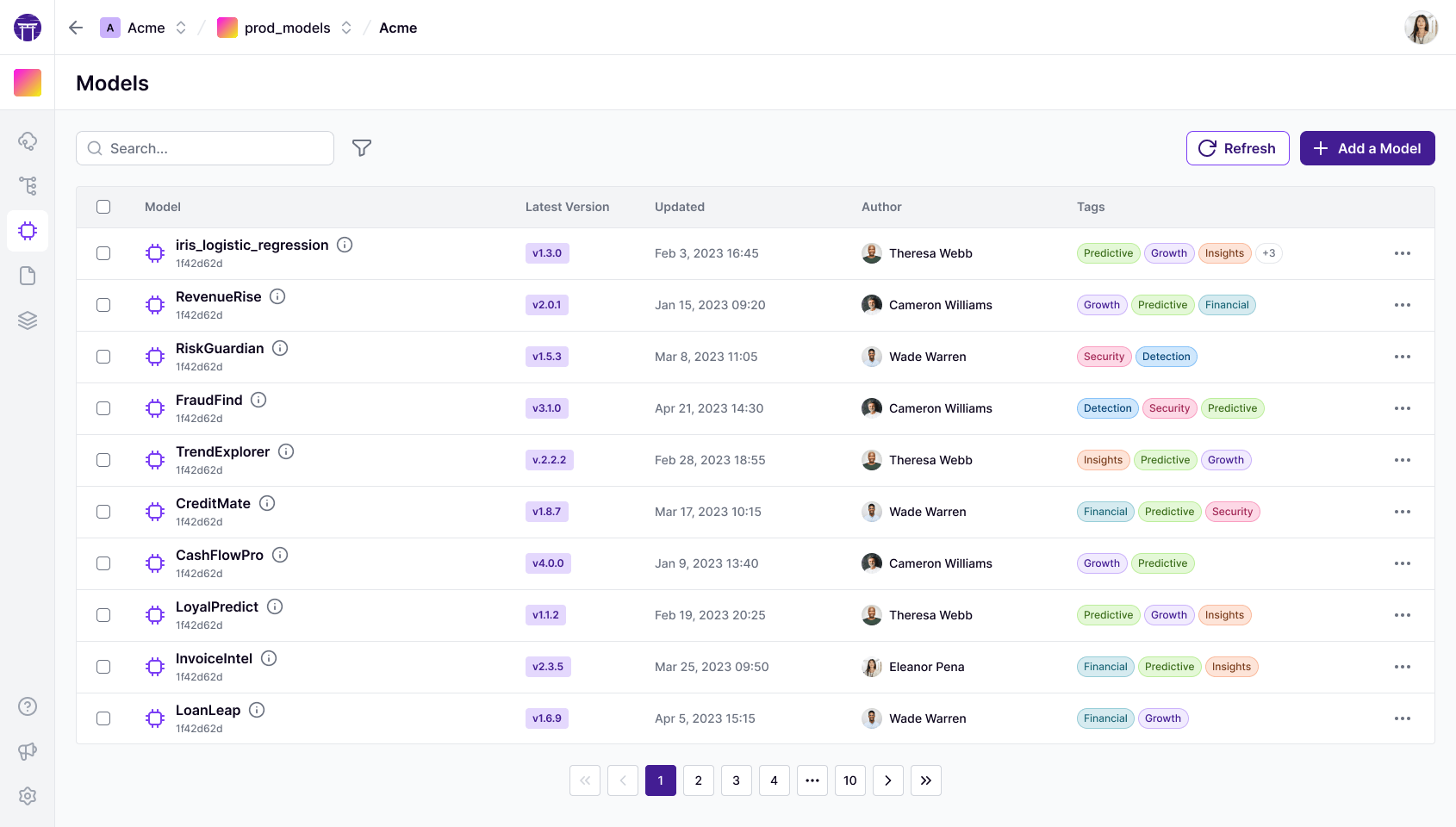

| Collaboration | Code-native collaboration through Git, CI/CD, and code review — ZenML Pro adds RBAC, workspaces, and team dashboards | Server Gallery for sharing workflows, collections, version history, and analytic apps with role-based access control |

| ML Frameworks | Use any Python ML framework — TensorFlow, PyTorch, scikit-learn, XGBoost, LightGBM — with native materializers and tracking | R-based predictive tools plus Intelligence Suite for AutoML — Python tool enables scikit-learn and other frameworks inside workflows |

| Monitoring | Integrates Evidently, WhyLogs, and other monitoring tools as stack components for automated drift detection and alerting | No native model monitoring or drift detection — Plans offers data health alerting but ML model performance tracking is absent |

| Governance | ZenML Pro provides RBAC, SSO, workspaces, and audit trails — self-hosted option keeps all data in your own infrastructure | Enterprise-grade governance with ISO 27001, SOC 2, RBAC, SSO, audit logs, and new lineage integrations with Atlan and Collibra |

| Experiment Tracking | Native metadata tracking plus seamless integration with MLflow, Weights & Biases, Neptune, and Comet for rich experiment comparison | No built-in experiment tracking — workflow version history exists on Server but structured ML experiment comparison is absent |

| Reproducibility | Automatic artifact versioning, code-to-Git linking, and containerized execution guarantee reproducible pipeline runs | Deterministic workflow files are repeatable — though Python/R environment drift across machines can affect consistency |

| Auto-Retraining | Schedule pipelines via any orchestrator or use ZenML Pro event triggers for drift-based automated retraining workflows | Server scheduling and API-triggered workflow runs enable periodic retraining — but no ML-signal-based automatic triggers |