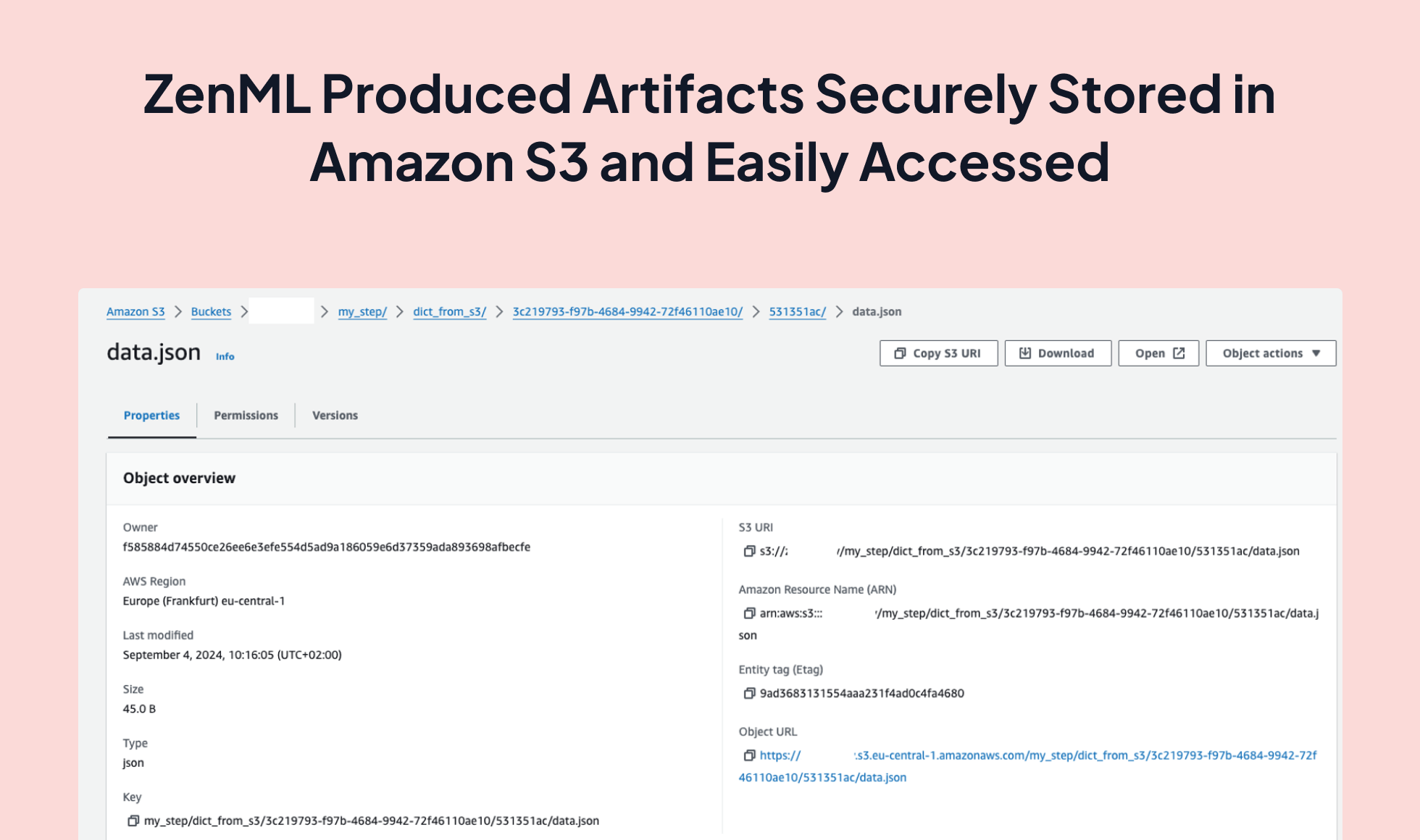

Elevate your MLOps game by integrating Amazon S3 with ZenML for efficient and reliable artifact storage. This powerful combination allows you to store and manage your pipeline artifacts in the cloud, ensuring scalability, high availability, and seamless collaboration for your machine learning projects.

# Step 1: Install the AWS integration

>>> zenml integration install s3

# Step 2: Register the S3 artifact store

>>> zenml artifact-store register s3_store -f s3 --path="s3://your-bucket-name"

# Step 3: [Optional] Connect the S3 artifact store to a Service Connector

>>> zenml artifact-store connect s3_store -i

# Step 4: Update your stack to use the S3 artifact store

>>> zenml stack update -a s3_store

# Step 5: Run the pipeline using the S3 artifact store

>>> python3 my_pipeline.py

Initiating a new run for the pipeline: my_pipeline.

Executing a new run.

Using user: user1

Using stack: remote_stack

orchestrator: default

artifact_store: s3_store

You can visualize your pipeline runs in the ZenML Dashboard. In order to try it locally, please run zenml up.

Step my_step has started.

Step my_step has finished in 0.078s.

Step my_step completed successfully.

Pipeline run has finished in 0.112s.

The artifact value you saved in the `my_pipeline` run is:

{'key': 'value', 'message': 'Hello from S3!'}

from typing_extensions import Annotated

from zenml import pipeline, step

from zenml.client import Client

@step

def my_step(input_dict: dict) -> Annotated[dict, "dict_from_s3"]:

output_dict = input_dict.copy()

output_dict["message"] = "Hello from S3!"

return output_dict

@pipeline

def my_pipeline(input_dict: dict):

my_step(input_dict)

if __name__ == "__main__":

input_data = {"key": "value"}

my_pipeline(input_data)

print(

"The artifact value you saved in the `my_pipeline` run is:\n"

+ str(Client().get_artifact_version(name_id_or_prefix="dict_from_s3").load())

)my_pipeline.py

Expand your ML pipelines with more than 50 ZenML Integrations