| Workflow Orchestration | ZenML defines ML/AI workflows as pipelines (DAGs) of steps and executes them on configurable stacks, with artifacts and metadata tracked by default. | LangGraph natively orchestrates agent workflows as executable graphs with branching and cycles, optimized for stateful LLM/agent control flow. |

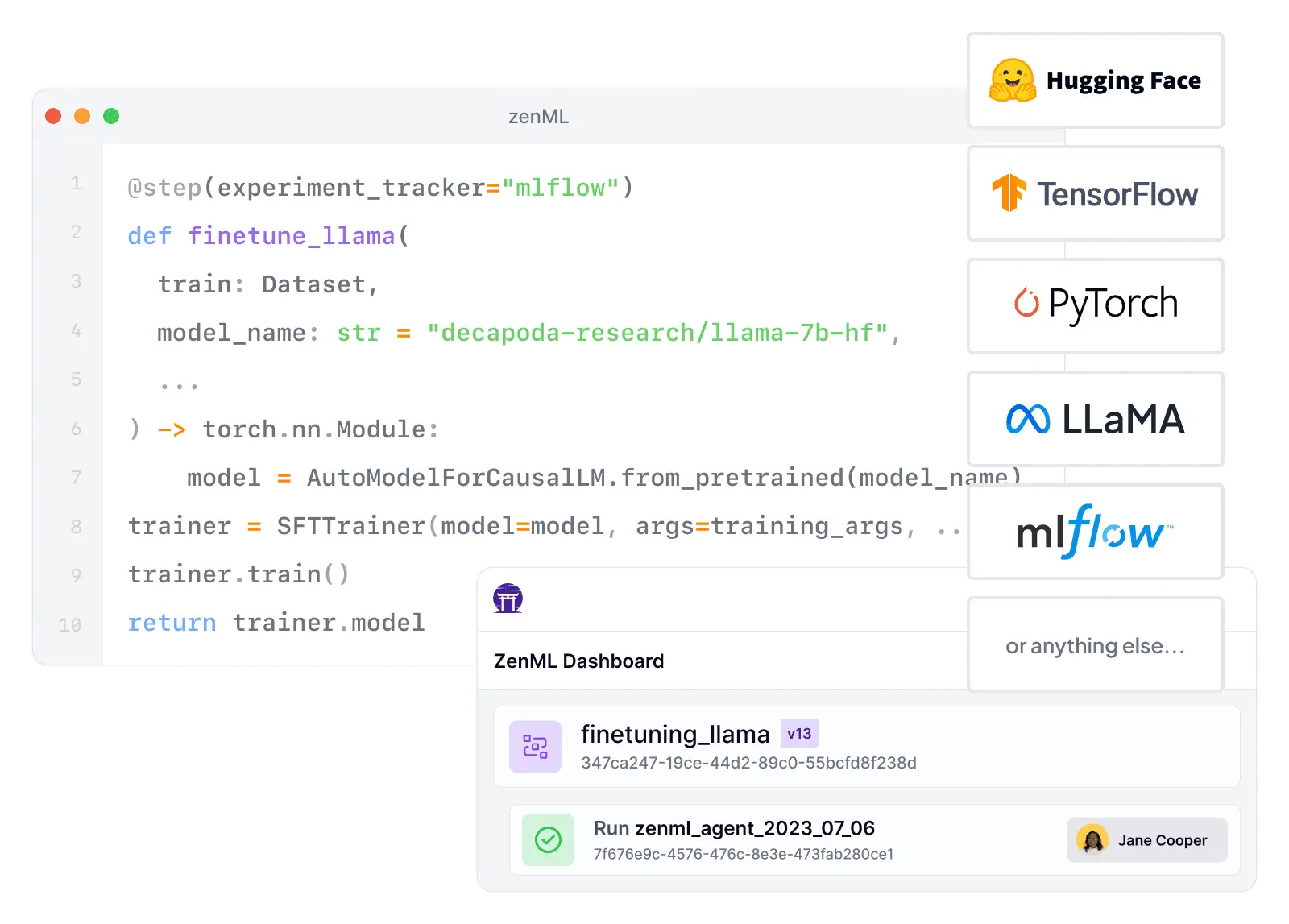

| Integration Flexibility | ZenML's stack architecture lets teams swap orchestrators, artifact stores, experiment trackers, and deployers without rewriting pipeline logic. | LangGraph integrates tightly with the LangChain ecosystem, but doesn't provide an MLOps-style plug-in stack for infrastructure components. |

| Vendor Lock-In | ZenML is cloud-agnostic by design: pipelines run on stacks you control, and you can move between infrastructures by swapping stack components. | LangGraph's core library is open-source (MIT) and runs anywhere Python runs; vendor coupling mainly appears when adopting LangSmith for managed operations. |

| Setup Complexity | ZenML can start locally and scale via stacks, but production setups require configuring orchestrators, artifact stores, and other components. | LangGraph's getting-started path is lightweight (pip install + define a graph), and the CLI can bootstrap local dev servers and Docker-based runs. |

| Learning Curve | ZenML maps closely to familiar ML concepts (steps, pipelines, artifacts), and its abstractions align with production ML workflow structure. | LangGraph's explicit state/graph model is powerful, but teams face a learning curve around state design, reducers, interrupts, and debugging cyclical flows. |

| Scalability | ZenML scales by delegating execution to orchestrators (e.g., Kubernetes-native options) and by externalizing artifacts and metadata into stack components. | LangGraph scales to production workloads when deployed with an agent server architecture (Postgres + Redis) or via LangSmith Deployment. |

| Cost Model | ZenML's open-source version is free; paid plans are priced around pipeline-run volume and team governance features. | LangGraph OSS is free; LangSmith adds transparent per-seat pricing plus usage-based charges for deployments and traces. |

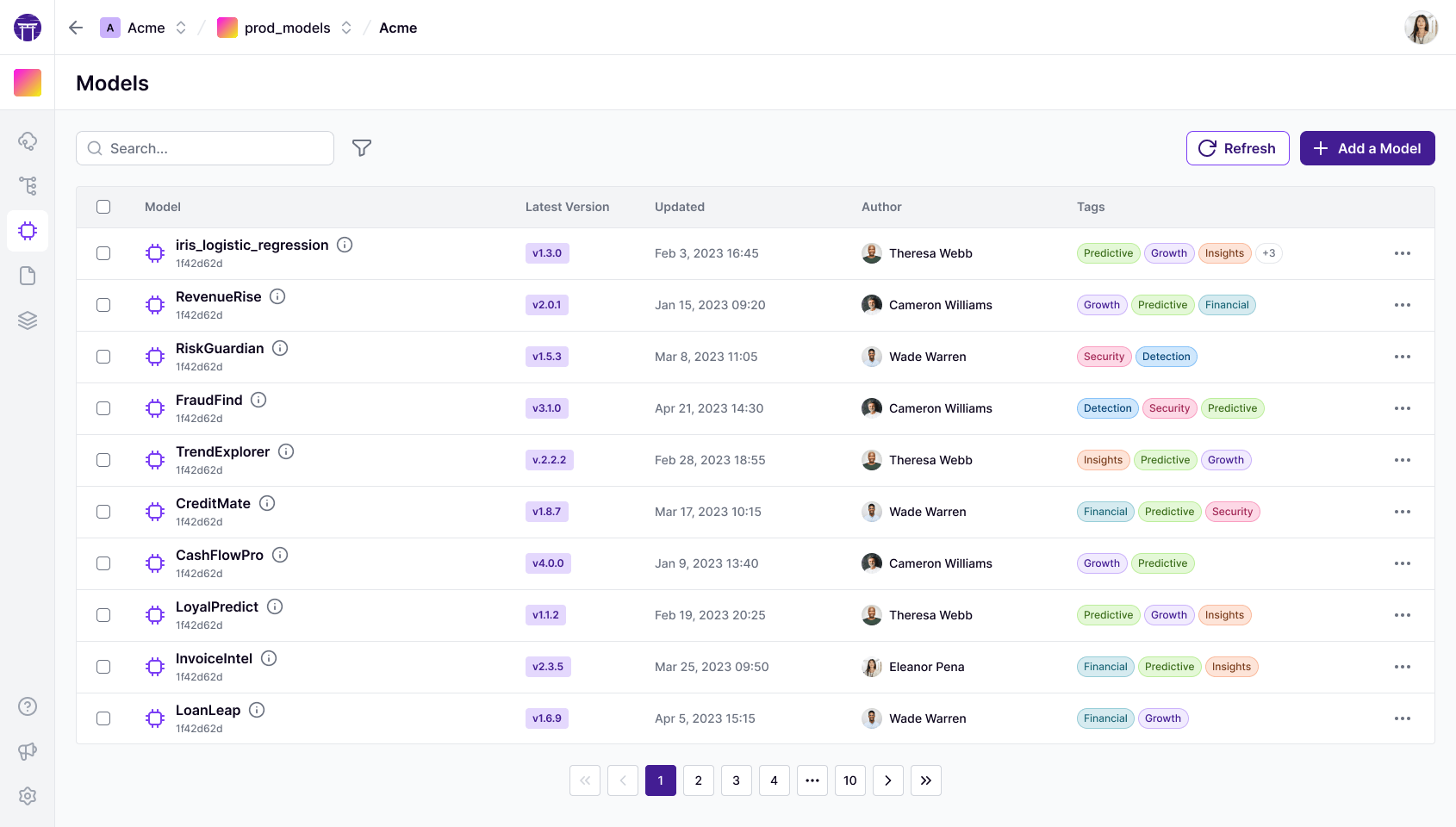

| Collaboration | ZenML Pro adds projects/workspaces, RBAC, and UI control planes for models and artifacts to enable team collaboration on production workflows. | LangGraph collaboration is strongest when paired with LangSmith (workspaces, team features, deployment management); the OSS library on its own is just application code, with no built-in team features. |

| ML Frameworks | ZenML is designed to wrap ML training/evaluation/inference across frameworks via steps, artifacts, and stack integrations. | LangGraph is framework-agnostic at the code level but optimized for LLM/agent workflows rather than deep integration with ML training frameworks. |

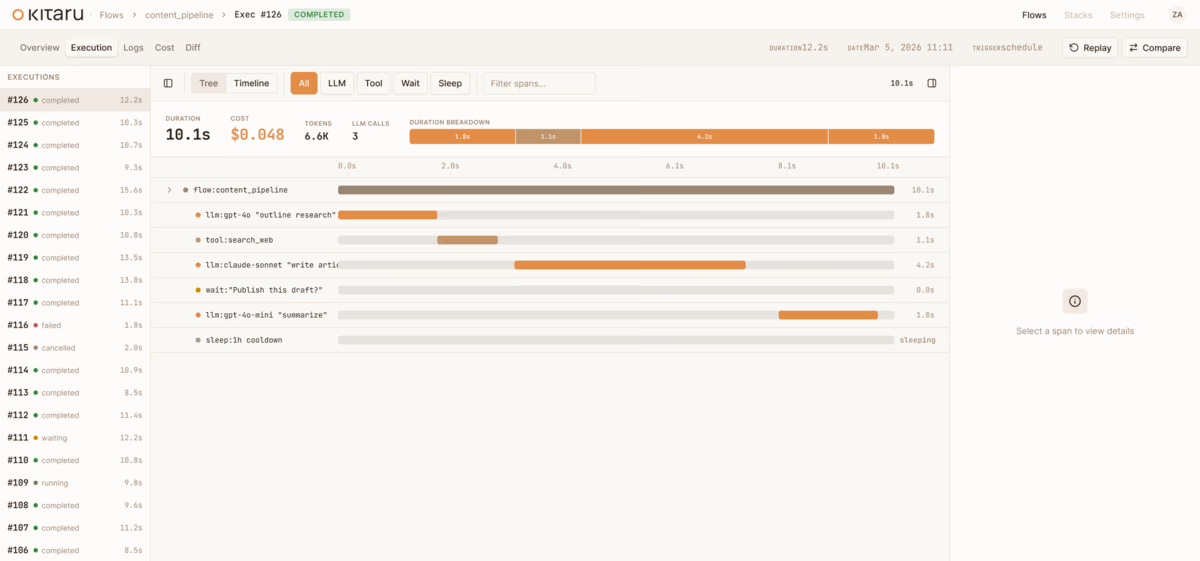

| Monitoring | ZenML tracks pipeline/step metadata and artifacts to support operational debugging, governance, and integration with monitoring tooling. | LangGraph pairs with LangSmith for deep tracing and debugging of agent execution, with visual trace inspection and replay capabilities. |

| Governance | ZenML Pro plans include RBAC/SSO and enterprise features (custom roles, audit logs) aligned with governance requirements. | Governance controls (SSO/RBAC, enterprise support) are delivered through LangSmith Enterprise rather than the LangGraph OSS library. |

| Experiment Tracking | ZenML treats pipeline runs as experiments and supports experiment tracker components to log metrics, parameters, and model metadata. | LangGraph captures execution traces and state trajectories, but is not an experiment tracking system for ML training runs and hyperparameter sweeps. |

| Reproducibility | ZenML automatically tracks artifact lineage (inputs/outputs, producing steps, dependencies) and uses that to enable reproducibility and caching. | LangGraph supports checkpointing and replay for agent state, but doesn't natively version datasets/models/environments the way an MLOps platform does. |

| Auto-Retraining | ZenML is built for scheduled and trigger-based pipelines that can retrain models, validate data, and promote artifacts through environments. | LangGraph is not designed as an auto-retraining or ML CI/CD system; it focuses on orchestrating agent behaviors and stateful execution. |
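
The orchestration rows contrast two execution models, and a small library-free sketch may make the difference concrete. It does not use the ZenML or LangGraph APIs; `run_dag`, `run_state_graph`, and the step and node names are hypothetical, chosen only for illustration. The first helper runs each step once in dependency order and keeps every output as an artifact; the second passes a shared state between nodes and lets a router loop back until a condition is met.

```python
from graphlib import TopologicalSorter

# Illustrative only: these helpers are hypothetical and do not mirror
# the ZenML or LangGraph APIs.


def run_dag(steps, deps):
    """Run each step exactly once in dependency order and keep every output."""
    artifacts = {}
    for name in TopologicalSorter(deps).static_order():
        inputs = [artifacts[d] for d in deps.get(name, ())]
        artifacts[name] = steps[name](*inputs)
    return artifacts


def run_state_graph(nodes, router, state, start, max_steps=20):
    """Pass a shared state between nodes; the router may send it back to an earlier node."""
    current, taken = start, 0
    while current is not None:  # the router returns None to end the run
        if taken >= max_steps:
            raise RuntimeError("step limit reached before the graph finished")
        state = nodes[current](state)
        current = router(current, state)
        taken += 1
    return state


# Pipeline-style DAG: load -> train -> evaluate, no cycles.
artifacts = run_dag(
    steps={
        "load": lambda: [1, 2, 3],
        "train": lambda data: sum(data) / len(data),
        "evaluate": lambda model: model > 1,
    },
    deps={"load": [], "train": ["load"], "evaluate": ["train"]},
)


def route(node, state):
    """After a draft, always review; after a review, loop back unless approved."""
    if node == "draft":
        return "review"
    return None if state["approved"] else "draft"


# Agent-style graph: draft and review repeat until the review approves.
final_state = run_state_graph(
    nodes={
        "draft": lambda s: {**s, "attempts": s["attempts"] + 1},
        "review": lambda s: {**s, "approved": s["attempts"] >= 3},
    },
    router=route,
    state={"attempts": 0, "approved": False},
    start="draft",
)
```

In the DAG, every intermediate output stays addressable by step name, which is the property that lineage tracking and caching build on. In the state graph, only the evolving state is carried forward, and the `max_steps` guard stands in for the kind of limit a cyclic flow needs to avoid running forever.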