| Workflow Orchestration | ML-native pipelines with portable execution via stacks, while still supporting Kubernetes-based orchestration when needed | Kubernetes-native workflow engine with mature DAG/steps execution, retries, scheduling, and strong operational controls on Kubernetes |
| Integration Flexibility |

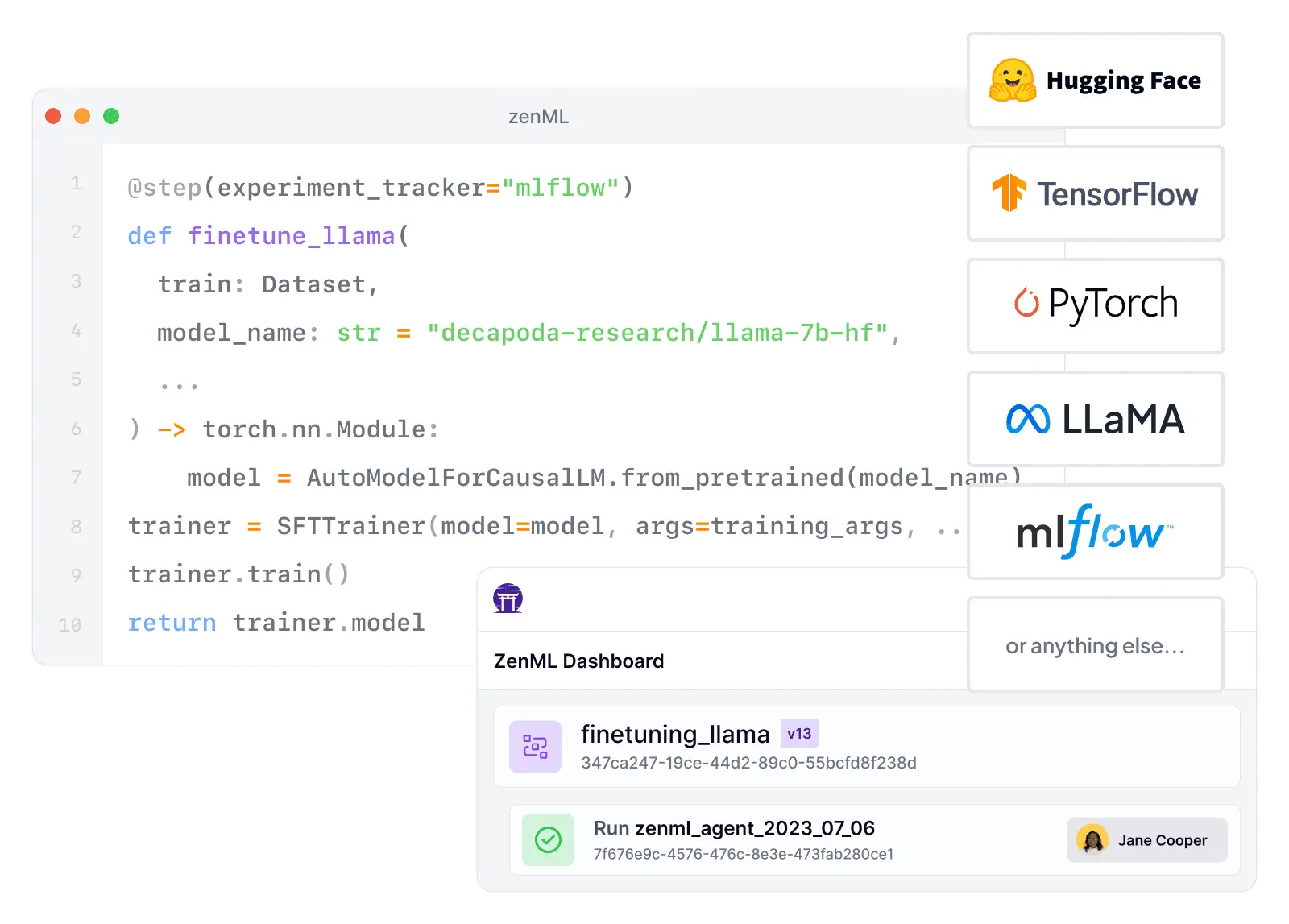

Composable stack with 50+ MLOps integrations — swap orchestrators, trackers, and deployers without code changes

|

Runs virtually any containerized tool, but integrations are DIY — teams wire credentials, storage, and conventions manually

|

| Vendor Lock-In | Cloud-agnostic by design: stacks make it easy to switch infrastructure providers and tools as needs change | Runs on any Kubernetes cluster and is CNCF-governed open source; lock-in is primarily to Kubernetes itself, not a specific cloud |
| Setup Complexity | Run pip install zenml to start locally, then scale up by swapping stacks, with no immediate Kubernetes dependency | Requires a Kubernetes cluster plus an Argo installation, RBAC configuration, an artifact repository, and an optional database for full value |
| Learning Curve | Python-first and ML-first, which reduces cognitive load for ML engineers who don't want to become Kubernetes experts | Assumes Kubernetes fluency (CRDs, pods, namespaces, service accounts, storage); ML teams often need platform help to adopt it |
| Scalability | Delegates compute to scalable backends (Kubernetes, Spark, cloud ML services), so horizontal scaling is bounded by the chosen backend rather than by ZenML | Built for parallel job orchestration on Kubernetes with parallelism limits, retries, and workflow offloading for large DAGs |
| Cost Model | Open-source core is free: pay only for your own infrastructure, with an optional managed cloud for enterprise features | Apache-2.0 licensed and CNCF-governed with no per-seat or per-run pricing; the costs are Kubernetes infrastructure and operations |
| Collaboration |

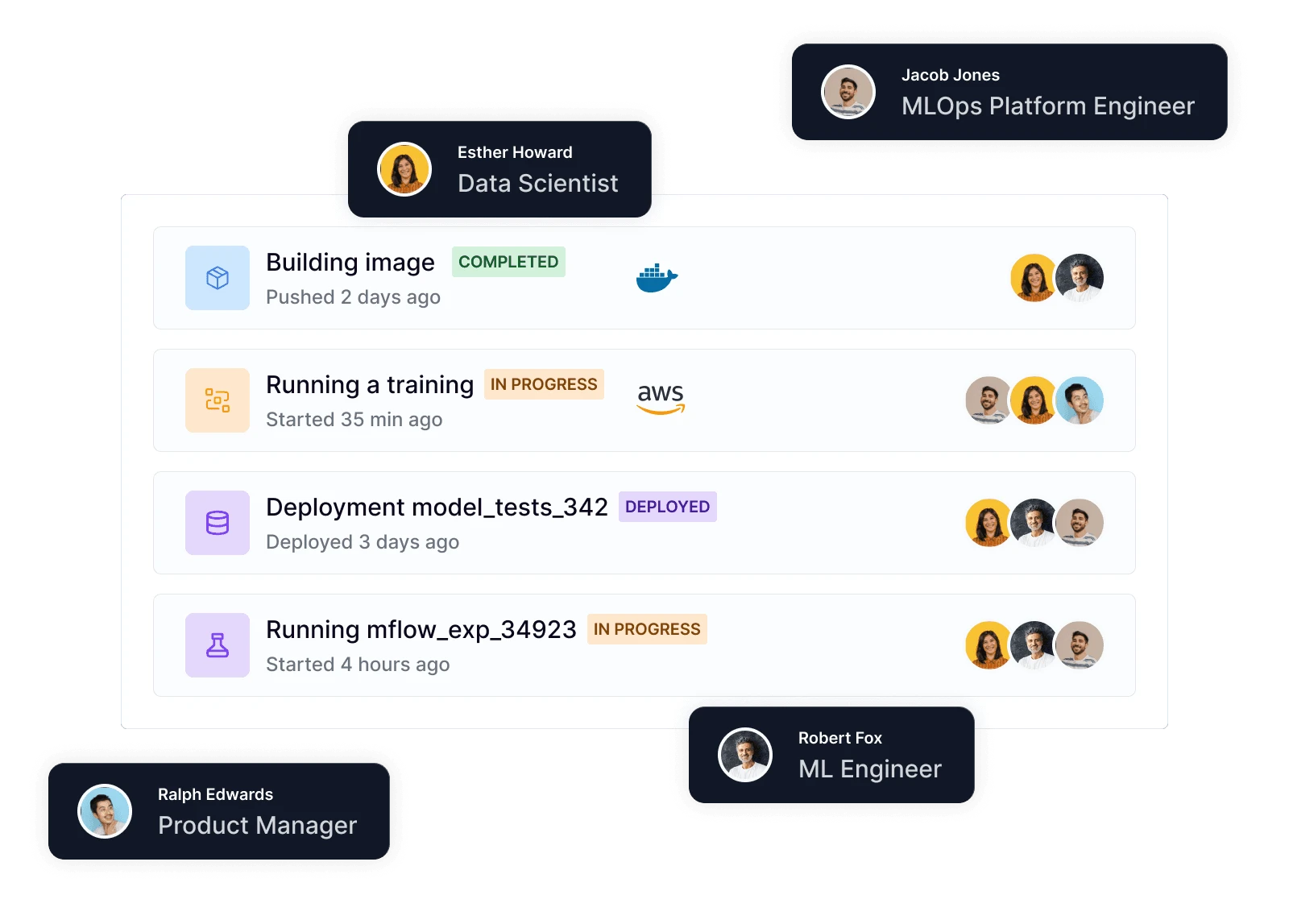

Code-native collaboration through Git, CI/CD, and code review — ZenML Pro adds RBAC, workspaces, and team dashboards

|

UI and SSO support for multi-user setups, but collaboration is centered on workflow execution and logs — not ML experiment sharing

|

| ML Frameworks | Use any Python ML framework (TensorFlow, PyTorch, scikit-learn, XGBoost, LightGBM) with native materializers and tracking | Framework-agnostic at the runtime level: if it runs in a container on Kubernetes, Argo can orchestrate it |
| Monitoring | Integrates Evidently, whylogs, and other monitoring tools as stack components for automated drift detection and alerting | Monitors workflow execution well (statuses, logs, Prometheus metrics), but has no built-in production model monitoring or ML drift detection |
| Governance | ZenML Pro provides RBAC, SSO, workspaces, and audit trails; the self-hosted option keeps all data in your own infrastructure | Kubernetes-centric governance with namespacing, RBAC, and workflow archiving, but ML-specific audit trails require external systems |
| Experiment Tracking | Native metadata tracking plus integration with MLflow, Weights & Biases, Neptune, and Comet for rich experiment comparison | No built-in experiment tracking: teams embed MLflow or W&B inside containers and standardize conventions manually |
| Reproducibility | Automatic artifact versioning, code-to-Git linking, and containerized execution make pipeline runs reproducible by default | Workflows are rerunnable, but reproducibility depends on pinned containers, data versioning, and team discipline; it is not enforced by default |
| Auto-Retraining | Schedule pipelines via any orchestrator, or use ZenML Pro event triggers for drift-based automated retraining workflows | CronWorkflows and webhook triggers enable automated retraining runs; model promotion and registry logic are left to your stack |