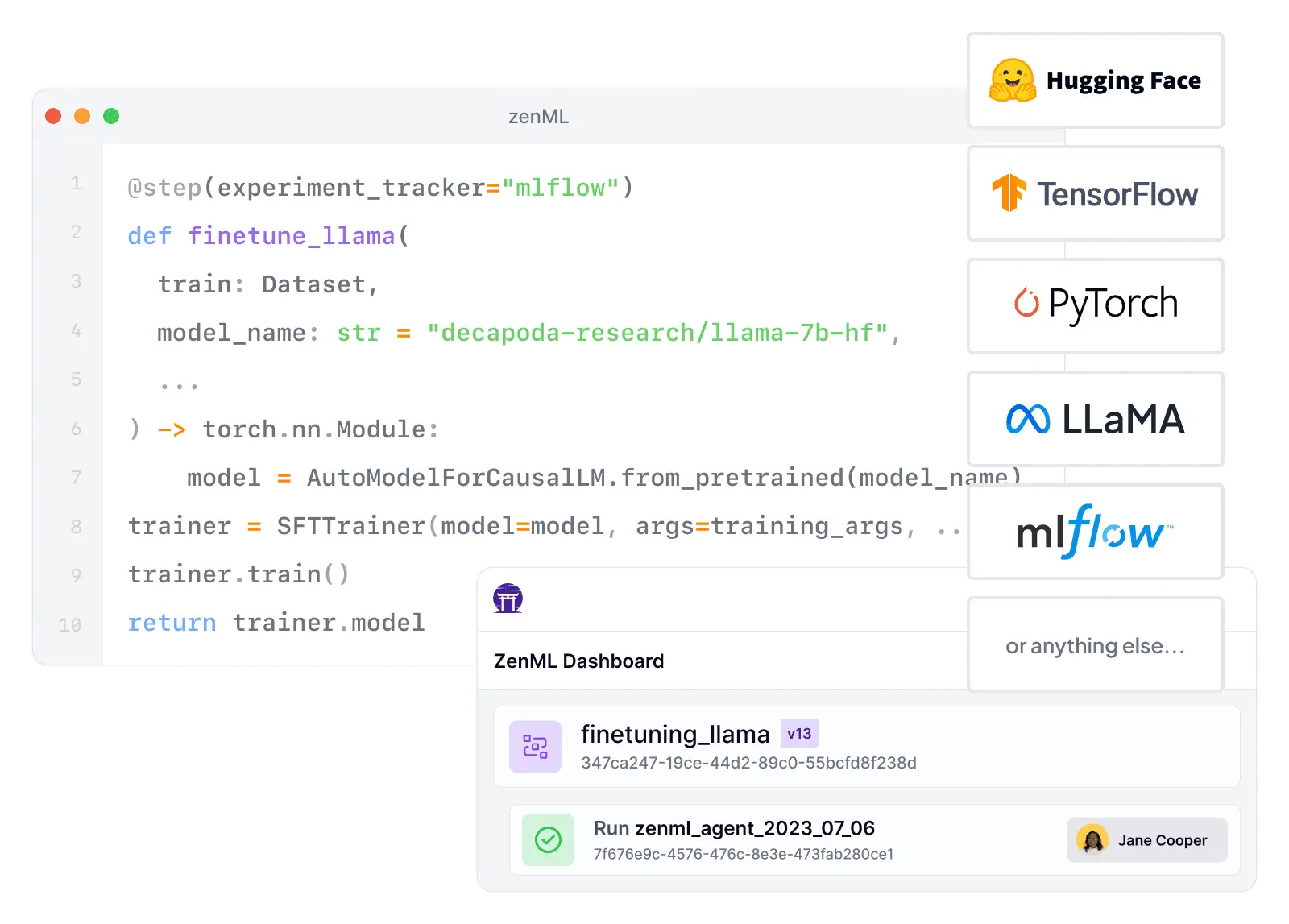

| Workflow Orchestration | ZenML is built for defining and running reproducible ML/AI pipelines end-to-end, with infrastructure abstracted behind swappable stacks. | Seldon Core focuses on model deployment and inference operations; it does not provide a native training/evaluation pipeline orchestrator. |

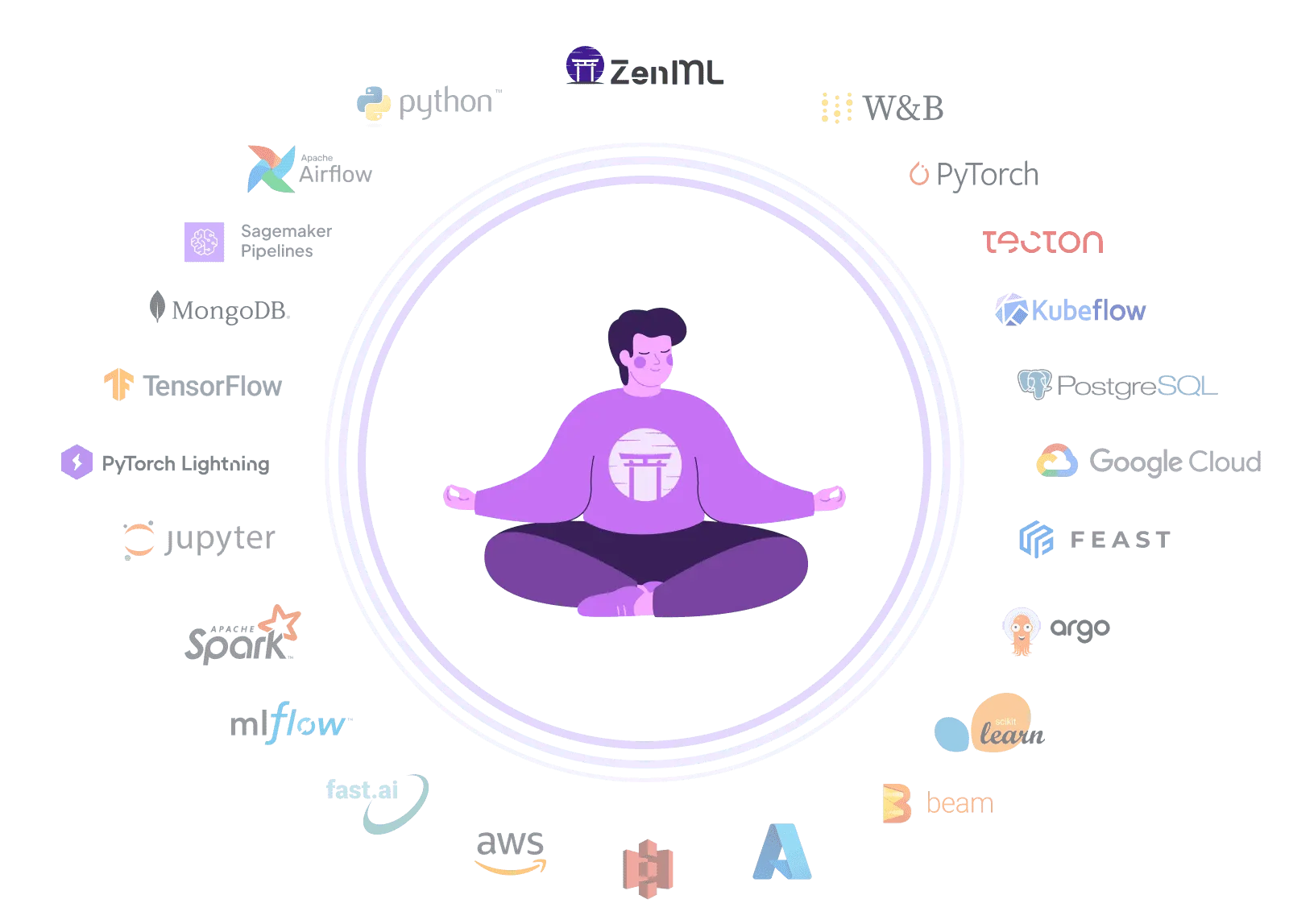

| Integration Flexibility | ZenML's composable stack architecture lets teams plug in different orchestrators, artifact stores, experiment trackers, and deployers without rewriting pipeline code. | Seldon Core supports multiple model frameworks and component styles (pre-packaged servers or language wrappers) and integrates deeply with the Kubernetes ecosystem. |

| Vendor Lock-In | ZenML is cloud-agnostic and designed to avoid lock-in by separating pipeline code from infrastructure backends. | Seldon Core is Kubernetes-native and production use requires a commercial license (BSL), increasing platform and vendor dependency. |

| Setup Complexity | ZenML can start locally and scale up by swapping stack components; teams can adopt orchestration and cloud components incrementally. | Seldon Core typically requires a Kubernetes cluster plus CRD/operator installation and gateway/ingress configuration, increasing time-to-first-value. |

| Learning Curve | ZenML's Python-native pipeline abstractions reduce friction for ML engineers who want to productionize workflows without becoming Kubernetes experts. | Seldon Core's primary abstractions (CRDs, gateways, inference graphs, rollout configs) are powerful but require Kubernetes and production serving knowledge. |

| Scalability | ZenML scales by delegating execution to orchestrators (Kubernetes, Airflow, Kubeflow, etc.) while keeping pipelines portable. | Seldon Core is built for high-scale production inference on Kubernetes and explicitly targets large-scale model deployment and operations. |

| Cost Model | ZenML offers a free OSS tier plus clearly published SaaS tiers, so teams can forecast cost as usage grows. | Seldon Core's production licensing is commercial (BSL for non-production only), and enterprise pricing is typically sales-led rather than self-serve. |

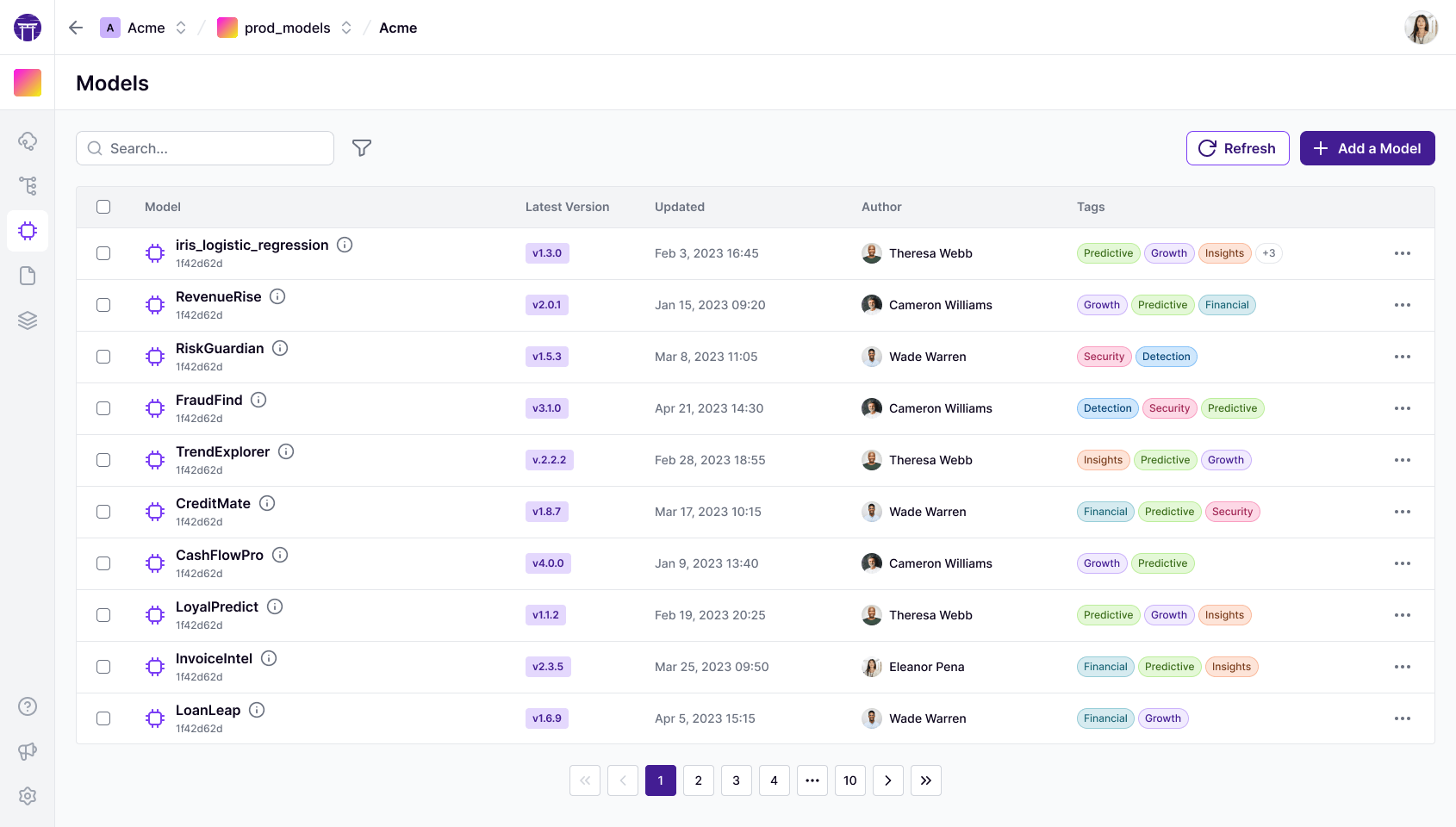

| Collaboration | ZenML Pro adds collaboration primitives like workspaces, RBAC/SSO, and UI-based control planes for artifacts and models. | Core itself is mainly an operator/runtime; richer collaboration and governance experiences are part of Seldon's commercial platform. |

| ML Frameworks | ZenML supports many ML/DL frameworks across the lifecycle by letting you compose training, evaluation, and deployment steps in Python. | Seldon Core supports serving models from multiple ML frameworks and languages via pre-packaged servers and language wrappers. |

| Monitoring | ZenML tracks artifacts, metadata, and lineage across pipeline runs so teams can diagnose issues and connect production behavior to training provenance. | Seldon Core provides advanced metrics, request logging, canaries/A-B tests, and outlier/explainer components for production inference monitoring. |

| Governance | ZenML provides lineage, metadata, and (in Pro) fine-grained RBAC/SSO that supports auditability and controlled promotion processes. | Core provides operational primitives, but governance (auditing, compliance controls) is part of Seldon's enterprise platform rather than the core runtime. |

| Experiment Tracking | ZenML can integrate with experiment trackers and also ties experiments to reproducible pipelines and versioned artifacts. | Seldon Core's 'experiments' are deployment experimentation/rollouts, not offline experiment tracking of runs, params, and metrics. |

| Reproducibility | ZenML emphasizes reproducibility via artifact/version tracking, metadata, and pipeline snapshots that help recreate environments and results. | Seldon Core makes deployments repeatable via Kubernetes resources, but doesn't natively reproduce the upstream training pipeline, datasets, and evaluation context. |

| Auto-Retraining | ZenML is designed to automate retraining and promotion by scheduling pipelines, triggering on events, and integrating CI/CD-style checks. | Seldon Core can host and monitor models, but automated retraining typically requires an external orchestrator or pipeline system. |